
Probability mass function: The horizontal axis is the index k, the number of occurrences. λ is the expected number of occurrences, which need not be an integer. The vertical axis is the probability of k occurrences given λ. The function is defined only at integer values of k. The connecting lines are only guides for the eye.

Cumulative distribution function: The horizontal axis is the index k, the number of occurrences. The CDF is discontinuous at the integers of k and flat everywhere else because a variable that is Poisson distributed takes on only integer values.

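| Notation | $\operatorname{Pois}(\lambda)$ |
|---|---|
| Parameters | $\lambda \in (0, \infty)$ (rate) |
| Support | $k \in \mathbb{N}_0$ (natural numbers starting from 0) |
| pmf | $\dfrac{\lambda^k e^{-\lambda}}{k!}$ |
| CDF | $\dfrac{\Gamma(\lfloor k + 1 \rfloor, \lambda)}{\lfloor k \rfloor!}$, or $e^{-\lambda} \sum_{j=0}^{\lfloor k \rfloor} \dfrac{\lambda^j}{j!}$, or $Q(\lfloor k + 1 \rfloor, \lambda)$ (for $k \ge 0$, where $\Gamma(x, y)$ is the upper incomplete gamma function, $\lfloor k \rfloor$ is the floor function, and $Q$ is the regularized gamma function) |
| Mean | $\lambda$ |
| Median | $\approx \lfloor \lambda + 1/3 - 0.02/\lambda \rfloor$ |
| Mode | $\lceil \lambda \rceil - 1, \lfloor \lambda \rfloor$ |
| Variance | $\lambda$ |
| Skewness | $\lambda^{-1/2}$ |
| Ex. kurtosis | $\lambda^{-1}$ |
| Entropy | $\lambda[1 - \log(\lambda)] + e^{-\lambda} \sum_{k=0}^{\infty} \dfrac{\lambda^k \log(k!)}{k!}$, or for large $\lambda$: $\tfrac{1}{2} \log(2 \pi e \lambda) - \tfrac{1}{12 \lambda} - \tfrac{1}{24 \lambda^2} - \tfrac{19}{360 \lambda^3} + O(\lambda^{-4})$ |
| MGF | $\exp[\lambda(e^t - 1)]$ |
| CF | $\exp[\lambda(e^{it} - 1)]$ |
| PGF | $\exp[\lambda(z - 1)]$ |
| Fisher information | $\dfrac{1}{\lambda}$ |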

In probability theory and statistics, the Poisson distribution (French pronunciation: [pwasɔ̃]; in English often rendered /ˈpwɑːsɒn/), named after French mathematician Siméon Denis Poisson, is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant rate and independently of the time since the last event.[1] The Poisson distribution can also be used for the number of events in other specified intervals such as distance, area or volume.

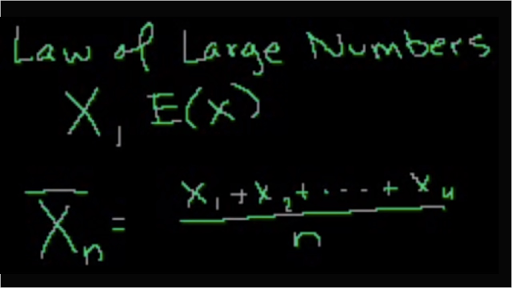


For instance, an individual keeping track of the amount of mail they receive each day may notice that they receive an average of 4 letters per day. If receiving any particular piece of mail does not affect the arrival times of future pieces of mail, i.e., if pieces of mail from a wide range of sources arrive independently of one another, then a reasonable assumption is that the number of pieces of mail received in a day obeys a Poisson distribution.[2] Other examples that may follow a Poisson distribution include the number of phone calls received by a call center per hour and the number of decay events per second from a radioactive source.


Basics

The Poisson distribution is popular for modelling the number of times an event occurs in an interval of time or space.

Example

The Poisson distribution may be useful to model events such as

- The number of meteorites greater than 1 meter diameter that strike Earth in a year

- The number of patients arriving in an emergency room between 10 and 11 pm

- The number of photons hitting a detector in a particular time interval

Assumptions and validity

The Poisson distribution is an appropriate model if the following assumptions are true:[3]

- k is the number of times an event occurs in an interval and k can take values 0, 1, 2, ....

- The occurrence of one event does not affect the probability that a second event will occur. That is, events occur independently.

- The average rate at which events occur is constant.

- Two events cannot occur at exactly the same instant; instead, at each very small sub-interval exactly one event either occurs or does not occur.

Or

- The actual probability distribution is given by a binomial distribution, and the number of trials is much larger than the number of successes one is asking about (see Related distributions).

If these conditions are true, then k is a Poisson random variable, and the distribution of k is a Poisson distribution.

Probability of events for a Poisson distribution

An event can occur 0, 1, 2, ... times in an interval. The average number of events in an interval is designated λ (lambda). λ is the event rate, also called the rate parameter. The probability of observing k events in an interval is given by the equation

$$P(k \text{ events in interval}) = e^{-\lambda} \frac{\lambda^k}{k!},$$

where

- λ is the average number of events per interval

- e is the number 2.71828... (Euler's number) the base of the natural logarithms

- k takes values 0, 1, 2, ...

- k! = k × (k − 1) × (k − 2) × ... × 2 × 1 is the factorial of k.

This equation is the probability mass function (PMF) for a Poisson distribution.

This equation can be adapted if, instead of the average number of events λ, we are given a time rate r for the events to happen. Then λ = rt (with r in units of 1/time), and

$$P(k \text{ events in interval } t) = \frac{(rt)^k e^{-rt}}{k!}.$$
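As a minimal sketch (plain Python, standard library only; the function names are illustrative and not from any particular library), the PMF and its rate-based form can be evaluated directly. Direct evaluation like this is adequate only for modest λ and k; a numerically stable log-space form is discussed under Computer software for the Poisson distribution.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), straight from lam**k * e**(-lam) / k!."""
    if k < 0:
        return 0.0
    return lam**k * math.exp(-lam) / math.factorial(k)


def poisson_pmf_rate(k: int, rate: float, t: float) -> float:
    """P(k events in an interval of length t) for events at `rate` per unit time (lambda = rate * t)."""
    return poisson_pmf(k, rate * t)


# The mail example: an average of 4 letters per day, probability of exactly 2 tomorrow.
print(round(poisson_pmf(2, 4.0), 4))  # 0.1465
```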

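Examples of probability for Poisson distributions

On a particular river, overflow floods occur once every 100 years on average. Calculate the probability of k = 0, 1, 2, 3, 4, 5, or 6 overflow floods in a 100-year interval, assuming the Poisson model is appropriate. Because the average event rate is one overflow flood per 100 years, λ = 1, and

$$P(k \text{ overflow floods in 100 years}) = \frac{\lambda^k e^{-\lambda}}{k!} = \frac{1^k e^{-1}}{k!}.$$

The table below gives the probability for 0 to 6 overflow floods in a 100-year period.

| k | P(k overflow floods in 100 years) |
|---|---|
| 0 | 0.3679 |
| 1 | 0.3679 |
| 2 | 0.1839 |
| 3 | 0.0613 |
| 4 | 0.0153 |
| 5 | 0.0031 |
| 6 | 0.0005 |

Ugarte and colleagues report that the average number of goals in a World Cup soccer match is approximately 2.5 and the Poisson model is appropriate.[4] Because the average event rate is 2.5 goals per match, λ = 2.5, and

$$P(k \text{ goals in a match}) = \frac{2.5^k e^{-2.5}}{k!}.$$

The table below gives the probability for 0 to 7 goals in a match.

| k | P(k goals in a World Cup soccer match) |
|---|---|
| 0 | 0.0821 |
| 1 | 0.2052 |
| 2 | 0.2565 |
| 3 | 0.2138 |
| 4 | 0.1336 |
| 5 | 0.0668 |
| 6 | 0.0278 |
| 7 | 0.0099 |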
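Both tables can be reproduced in a few lines. This sketch (plain Python; `probability_table` is a hypothetical helper, not a library function) evaluates the PMF for each k and rounds to four decimal places, the precision used in the tables.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam)."""
    return lam**k * math.exp(-lam) / math.factorial(k)


def probability_table(lam: float, k_max: int) -> list[tuple[int, float]]:
    """(k, P(X = k)) for k = 0..k_max, rounded to four decimals."""
    return [(k, round(poisson_pmf(k, lam), 4)) for k in range(k_max + 1)]


# Overflow floods per 100 years (lambda = 1) and World Cup goals per match (lambda = 2.5).
for k, p in probability_table(1.0, 6):
    print(f"floods k={k}: {p}")
for k, p in probability_table(2.5, 7):
    print(f"goals  k={k}: {p}")
```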

Once in an interval events: The special case of λ = 1 and k = 0

Suppose that astronomers estimate that large meteorites (above a certain size) hit the earth on average once every 100 years (λ = 1 event per 100 years), and that the number of meteorite hits follows a Poisson distribution. What is the probability of k = 0 meteorite hits in the next 100 years?

Under these assumptions, the probability that no large meteorites hit the earth in the next 100 years is roughly 0.37:

$$P(k = 0 \text{ meteorite hits in next 100 years}) = \frac{1^0 e^{-1}}{0!} = \frac{1}{e} \approx 0.37.$$

The remaining 1 − 0.37 = 0.63 is the probability of 1, 2, 3, or more large meteorite hits in the next 100 years. In an example above, an overflow flood occurred once every 100 years (λ = 1). The probability of no overflow floods in 100 years was roughly 0.37, by the same calculation.

In general, if an event occurs on average once per interval (λ = 1), and the events follow a Poisson distribution, then P(0 events in next interval) ≈ 0.37. In addition, P(exactly one event in next interval) ≈ 0.37, as shown in the table for overflow floods.

Examples that violate the Poisson assumptions

The number of students who arrive at the student union per minute will likely not follow a Poisson distribution, because the rate is not constant (low rate during class time, high rate between class times) and the arrivals of individual students are not independent (students tend to come in groups).

The number of magnitude 5 earthquakes per year in a country may not follow a Poisson distribution if one large earthquake increases the probability of aftershocks of similar magnitude.

Among patients admitted to the intensive care unit of a hospital, the number of days that the patients spend in the ICU is not Poisson distributed because the number of days cannot be zero. The distribution may be modeled using a Zero-truncated Poisson distribution.

Count distributions in which the number of intervals with zero events is higher than predicted by a Poisson model may be modeled using a Zero-inflated model.

Poisson regression and negative binomial regression

Poisson regression and negative binomial regression are useful for analyses where the dependent (response) variable is the count (0, 1, 2, ...) of the number of events or occurrences in an interval.

History

The distribution was first introduced by Siméon Denis Poisson (1781–1840) and published, together with his probability theory, in 1837 in his work Recherches sur la probabilité des jugements en matière criminelle et en matière civile ('Research on the Probability of Judgments in Criminal and Civil Matters').[5]:206 The work theorized about the number of wrongful convictions in a given country by focusing on certain random variables N that count, among other things, the number of discrete occurrences (sometimes called 'events' or 'arrivals') that take place during a time-interval of given length. The result had already been given in 1711 by Abraham de Moivre in De Mensura Sortis, seu, de Probabilitate Eventuum in Ludis a Casu Fortuito Pendentibus ('On the Measurement of Chance, or, on the Probability of Events in Games Depending Upon Fortuitous Chance').[6]:219[7]:14-15[8]:193[9]:157 This makes it an example of Stigler's law and it has prompted some authors to argue that the Poisson distribution should bear the name of de Moivre.[10][11]

In 1860, Simon Newcomb fitted the Poisson distribution to the number of stars found in a unit of space.[12] A further practical application of this distribution was made by Ladislaus Bortkiewicz in 1898 when he was given the task of investigating the number of soldiers in the Prussian army killed accidentally by horse kicks;[13]:23-25 this experiment introduced the Poisson distribution to the field of reliability engineering.

Definition

A discrete random variable X is said to have a Poisson distribution with parameter λ > 0 if, for k = 0, 1, 2, ..., the probability mass function of X is given by:[14]:60

$$f(k; \lambda) = \Pr(X = k) = \frac{\lambda^k e^{-\lambda}}{k!},$$

where

- e is Euler's number (e = 2.71828...)

- k! is the factorial of k.

The positive real number λ is equal to the expected value of X and also to its variance:[15]

$$\lambda = \operatorname{E}(X) = \operatorname{Var}(X).$$

The Poisson distribution can be applied to systems with a large number of possible events, each of which is rare. How many such events will occur during a fixed time interval? Under the right circumstances, this is a random number with a Poisson distribution.

Properties

Descriptive statistics

- The expected value and variance of a Poisson-distributed random variable are both equal to λ.

- The coefficient of variation is $\lambda^{-1/2}$, while the index of dispersion is 1.[9]:157

- The mean absolute deviation about the mean is[9]:157

$$\operatorname{E}[|X - \lambda|] = \frac{2 \lambda^{\lfloor \lambda \rfloor + 1} e^{-\lambda}}{\lfloor \lambda \rfloor!}.$$

- The mode of a Poisson-distributed random variable with non-integer λ is equal to $\lfloor \lambda \rfloor$, which is the largest integer less than or equal to λ. This is also written as floor(λ). When λ is a positive integer, the modes are λ and λ − 1.

- All of the cumulants of the Poisson distribution are equal to the expected value λ. The nth factorial moment of the Poisson distribution is $\lambda^n$.

- The expected value of a Poisson process is sometimes decomposed into the product of intensity and exposure (or more generally expressed as the integral of an 'intensity function' over time or space, sometimes described as “exposure”).[16]
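These identities can be checked numerically. The sketch below (plain Python; the helper names are hypothetical) sums the PMF over a truncated range, an approximation that assumes the tail beyond `k_max` is negligible, and compares the results with the closed forms for the mean, variance, mean absolute deviation and mode.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k), evaluated in log space so that lam**k and k! cannot overflow."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def summary(lam: float, k_max: int = 400) -> dict[str, float]:
    """Mean, variance and mean absolute deviation about lam, by summing the PMF up to k_max.

    The truncation is an approximation that assumes the tail beyond k_max is negligible."""
    probs = [poisson_pmf(k, lam) for k in range(k_max + 1)]
    mean = sum(k * p for k, p in enumerate(probs))
    variance = sum((k - mean) ** 2 * p for k, p in enumerate(probs))
    mad = sum(abs(k - lam) * p for k, p in enumerate(probs))
    return {"mean": mean, "variance": variance, "mad": mad}


def mad_closed_form(lam: float) -> float:
    """E|X - lam| = 2 * lam**(floor(lam) + 1) * e**(-lam) / floor(lam)!, computed in log space."""
    m = math.floor(lam)
    return 2.0 * math.exp((m + 1) * math.log(lam) - lam - math.lgamma(m + 1))


def modes(lam: float) -> list[int]:
    """[floor(lam)] for non-integer lam; [lam - 1, lam] when lam is a positive integer."""
    if lam == int(lam):
        return [int(lam) - 1, int(lam)]
    return [math.floor(lam)]


stats = summary(3.7)
print(stats, mad_closed_form(3.7), modes(3.7), modes(4.0))
```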

Median

Bounds for the median ($\nu$) of the distribution are known and are sharp:[17]

$$\lambda - \ln 2 \le \nu < \lambda + \frac{1}{3}.$$
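A quick numerical check of these bounds, as a sketch: the helper below (hypothetical name `poisson_median`) takes the median to be the smallest integer m with $P(X \le m) \ge 1/2$, an assumed convention, and accumulates the PMF with the recurrence $P(X = k) = P(X = k - 1)\,\lambda/k$. It is meant for moderate λ only, since $e^{-\lambda}$ underflows for very large λ.

```python
import math


def poisson_median(lam: float) -> int:
    """Smallest integer m with P(X <= m) >= 1/2, found by accumulating the PMF."""
    k = 0
    p = math.exp(-lam)     # P(X = 0); direct form, so keep lam moderate
    cdf = p
    while cdf < 0.5:
        k += 1
        p *= lam / k       # P(X = k) = P(X = k - 1) * lam / k
        cdf += p
    return k


for lam in (0.5, 1.0, 2.5, 10.0, 37.3):
    m = poisson_median(lam)
    print(lam, m, lam - math.log(2) <= m < lam + 1 / 3)
```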

Higher moments

- The higher moments $m_k$ of the Poisson distribution about the origin are Touchard polynomials in λ:

$$m_k = \operatorname{E}[X^k] = \sum_{i=0}^{k} \lambda^i \left\{ {k \atop i} \right\},$$

where the {braces} denote Stirling numbers of the second kind.[18][1]:6 The coefficients of the polynomials have a combinatorial meaning. In fact, when the expected value of the Poisson distribution is 1, then Dobinski's formula says that the nth moment equals the number of partitions of a set of size n.
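The Touchard-polynomial form can be compared with direct summation over the PMF. In this sketch (helper names hypothetical) the Stirling numbers of the second kind come from the standard recurrence $\left\{ {n \atop k} \right\} = k \left\{ {n-1 \atop k} \right\} + \left\{ {n-1 \atop k-1} \right\}$; with λ = 1 the moments should reproduce the Bell numbers, in line with Dobinski's formula.

```python
import math


def stirling2(n: int, k: int) -> int:
    """Stirling number of the second kind {n brace k}, via the standard recurrence."""
    table = [[0] * (k + 1) for _ in range(n + 1)]   # table[j][i] holds {j brace i}
    table[0][0] = 1
    for j in range(1, n + 1):
        for i in range(1, min(j, k) + 1):
            table[j][i] = i * table[j - 1][i] + table[j - 1][i - 1]
    return table[n][k]


def touchard_moment(n: int, lam: float) -> float:
    """E[X^n] for X ~ Poisson(lam), as the Touchard polynomial sum_i lam^i {n brace i}."""
    return sum(lam**i * stirling2(n, i) for i in range(n + 1))


def moment_by_summation(n: int, lam: float, k_max: int = 200) -> float:
    """E[X^n] by direct summation over the PMF, truncated at k_max (assumed negligible tail)."""
    return sum(k**n * math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))
               for k in range(k_max + 1))


# With lam = 1 the moments are the Bell numbers (set partition counts).
print([touchard_moment(n, 1.0) for n in range(6)])
print(touchard_moment(4, 2.5), moment_by_summation(4, 2.5))
```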

For the non-centered moments we define , then[19]

where is some absolute constant greater than 0.

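Sums of Poisson-distributed random variables

- If $X_i \sim \operatorname{Pois}(\lambda_i)$ for $i = 1, \dots, n$ are independent, and $\lambda = \sum_{i=1}^n \lambda_i$, then $Y = \left(\sum_{i=1}^n X_i\right) \sim \operatorname{Pois}(\lambda)$.[20]:65 A converse is Raikov's theorem, which says that if the sum of two independent random variables is Poisson-distributed, then so are each of those two independent random variables.[21][22]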
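For two summands, additivity can be verified by discrete convolution, $P(X_1 + X_2 = k) = \sum_{j=0}^{k} P(X_1 = j)\,P(X_2 = k - j)$. The sketch below (hypothetical helper names; the rates 1.3 and 2.2 are arbitrary) compares that convolution with the PMF of $\operatorname{Pois}(\lambda_1 + \lambda_2)$.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def pmf_of_sum(k: int, lam1: float, lam2: float) -> float:
    """P(X1 + X2 = k) for independent X1 ~ Pois(lam1), X2 ~ Pois(lam2), by discrete convolution."""
    return sum(poisson_pmf(j, lam1) * poisson_pmf(k - j, lam2) for j in range(k + 1))


lam1, lam2 = 1.3, 2.2
for k in range(6):
    print(k, pmf_of_sum(k, lam1, lam2), poisson_pmf(k, lam1 + lam2))
```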

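Other properties

- The Poisson distributions are infinitely divisible probability distributions.[23]:233[9]:159

- The directed Kullback–Leibler divergence of $P = \operatorname{Pois}(\lambda)$ from $P_0 = \operatorname{Pois}(\lambda_0)$ is given by

$$\operatorname{D}_\text{KL}(P \| P_0) = \lambda_0 - \lambda + \lambda \log \frac{\lambda}{\lambda_0}.$$

- Bounds for the tail probabilities of a Poisson random variable $X \sim \operatorname{Pois}(\lambda)$ can be derived using a Chernoff bound argument:[24]:97-98

$$P(X \ge x) \le \frac{(e \lambda)^x e^{-\lambda}}{x^x}, \quad \text{for } x > \lambda,$$

$$P(X \le x) \le \frac{(e \lambda)^x e^{-\lambda}}{x^x}, \quad \text{for } x < \lambda.$$

- Inequalities that relate the distribution function of a Poisson random variable $X \sim \operatorname{Pois}(\lambda)$ to the standard normal distribution function $\Phi(x)$ are as follows:[25]

$$\Phi\left(\operatorname{sign}(k - \lambda) \sqrt{2 \operatorname{D}_\text{KL}(k \| \lambda)}\right) < P(X \le k) < \Phi\left(\operatorname{sign}(k - \lambda + 1) \sqrt{2 \operatorname{D}_\text{KL}(k + 1 \| \lambda)}\right), \quad \text{for } k > 0,$$

where $\operatorname{D}_\text{KL}(k \| \lambda)$ is the directed Kullback–Leibler divergence, as described above.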
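The tail and normal bounds can be spot-checked numerically. This is a sketch (plain Python plus `statistics.NormalDist` from the standard library; helper names hypothetical) that writes the Chernoff bound in the equivalent form $\exp(-\operatorname{D}_\text{KL}(x \| \lambda))$, which equals $(e\lambda)^x e^{-\lambda}/x^x$ but avoids overflowing $x^x$.

```python
import math
from statistics import NormalDist


def poisson_cdf(x: int, lam: float) -> float:
    """P(X <= x), accumulated with the recurrence P(k) = P(k - 1) * lam / k."""
    p = cdf = math.exp(-lam)
    for k in range(1, x + 1):
        p *= lam / k
        cdf += p
    return cdf


def kl_poisson(a: float, b: float) -> float:
    """D_KL(Pois(a) || Pois(b)) = b - a + a*log(a/b); for a = 0 this reduces to b."""
    return b - a + (a * math.log(a / b) if a > 0 else 0.0)


def chernoff_bound(x: int, lam: float) -> float:
    """(e*lam)^x * e^(-lam) / x^x, written as exp(-D_KL(x || lam))."""
    return math.exp(-kl_poisson(x, lam))


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def normal_bounds(k: int, lam: float) -> tuple[float, float]:
    """Lower and upper normal-CDF bounds on P(X <= k), for k > 0."""
    phi = NormalDist().cdf
    # max(0.0, ...) guards against a tiny negative divergence from rounding
    lower = phi(sign(k - lam) * math.sqrt(max(0.0, 2 * kl_poisson(k, lam))))
    upper = phi(sign(k - lam + 1) * math.sqrt(max(0.0, 2 * kl_poisson(k + 1, lam))))
    return lower, upper


lam = 4.0
for x in (8, 12):   # upper tail, x > lam
    print(f"P(X >= {x}) = {1 - poisson_cdf(x - 1, lam):.3e} <= {chernoff_bound(x, lam):.3e}")
for k in (1, 4, 7):
    lo, hi = normal_bounds(k, lam)
    print(f"{lo:.4f} < P(X <= {k}) = {poisson_cdf(k, lam):.4f} < {hi:.4f}")
```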

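Poisson races

Let $X \sim \operatorname{Pois}(\lambda)$ and $Y \sim \operatorname{Pois}(\mu)$ be independent random variables, with $\lambda < \mu$; then we have that

$$\frac{e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}}{(\lambda + \mu)^2} - \frac{e^{-(\lambda + \mu)}}{2 \sqrt{\lambda \mu}} - \frac{e^{-(\lambda + \mu)}}{4 \lambda \mu} \le P(X - Y \ge 0) \le e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}.$$

The upper bound is proved using a standard Chernoff bound.

The lower bound can be proved by noting that $P(X - Y \ge 0 \mid X + Y = i)$ is the probability that $Z \ge \frac{i}{2}$, where $Z \sim \operatorname{Bin}\left(i, \frac{\lambda}{\lambda + \mu}\right)$, which is bounded below by $\frac{1}{(i + 1)^2} e^{-i D\left(0.5 \| \frac{\lambda}{\lambda + \mu}\right)}$, where D is relative entropy (see the entry on bounds on tails of binomial distributions for details). Further noting that $X + Y \sim \operatorname{Pois}(\lambda + \mu)$, and computing a lower bound on the unconditional probability gives the result. More details can be found in the appendix of Kamath et al.[26]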

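Poisson Approximation

Assume $X_1 \sim \operatorname{Pois}(\lambda_1), X_2 \sim \operatorname{Pois}(\lambda_2), \dots, X_n \sim \operatorname{Pois}(\lambda_n)$ are independent, where $\lambda_1 + \lambda_2 + \dots + \lambda_n = 1$; then[27] $(X_1, X_2, \dots, X_n)$ is multinomially distributed, $(X_1, X_2, \dots, X_n) \sim \operatorname{Mult}(N, \lambda_1, \lambda_2, \dots, \lambda_n)$, conditioned on $N = X_1 + X_2 + \dots + X_n$.

This means,[24]:101-102 among other things, that for any nonnegative function $f(x_1, x_2, \dots, x_n)$, if $(Y_1, Y_2, \dots, Y_n) \sim \operatorname{Mult}(m, \mathbf{p})$ is multinomially distributed, then

$$\operatorname{E}[f(Y_1, Y_2, \dots, Y_n)] \le e \sqrt{m} \, \operatorname{E}[f(X_1, X_2, \dots, X_n)],$$

where $X_1, X_2, \dots, X_n$ are independent with $X_i \sim \operatorname{Pois}(m p_i)$.

The factor of $e \sqrt{m}$ can be replaced by 2 if f is further assumed to be monotonically increasing or decreasing.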

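Related distributions

- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the difference $Y = X_1 - X_2$ follows a Skellam distribution.

- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the distribution of $X_1$ conditional on $X_1 + X_2$ is a binomial distribution.

- Specifically, if $X_1 + X_2 = k$, then $X_1 \mid (X_1 + X_2 = k) \sim \operatorname{Binom}\left(k, \frac{\lambda_1}{\lambda_1 + \lambda_2}\right)$.

- More generally, if X1, X2,..., Xn are independent Poisson random variables with parameters λ1, λ2,..., λn, then, given $\sum_{j=1}^n X_j = k$, $X_i \sim \operatorname{Binom}\left(k, \frac{\lambda_i}{\sum_{j=1}^n \lambda_j}\right)$. In fact, $\{X_i\} \sim \operatorname{Multinom}\left(k, \left\{\frac{\lambda_i}{\sum_{j=1}^n \lambda_j}\right\}\right)$.

- If $X \sim \operatorname{Pois}(\lambda)$ and the distribution of $Y$, conditional on X = k, is a binomial distribution, $Y \mid (X = k) \sim \operatorname{Binom}(k, p)$, then the distribution of Y follows a Poisson distribution $Y \sim \operatorname{Pois}(\lambda \cdot p)$. In fact, if $\{Y_i\}$, conditional on X = k, follows a multinomial distribution, $\{Y_i\} \mid (X = k) \sim \operatorname{Multinom}(k, p_i)$, then each $Y_i$ follows an independent Poisson distribution $Y_i \sim \operatorname{Pois}(\lambda \cdot p_i)$.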

- The Poisson distribution can be derived as a limiting case to the binomial distribution as the number of trials goes to infinity and the expected number of successes remains fixed — see law of rare events below. Therefore, it can be used as an approximation of the binomial distribution if n is sufficiently large and p is sufficiently small. There is a rule of thumb stating that the Poisson distribution is a good approximation of the binomial distribution if n is at least 20 and p is smaller than or equal to 0.05, and an excellent approximation if n ≥ 100 and np ≤ 10.[28]

- The Poisson distribution is a special case of the discrete compound Poisson distribution (or stuttering Poisson distribution) with only a parameter.[29][30] The discrete compound Poisson distribution can be deduced from the limiting distribution of univariate multinomial distribution. It is also a special case of a compound Poisson distribution.

- For sufficiently large values of λ, (say λ>1000), the normal distribution with mean λ and variance λ (standard deviation $\sqrt{\lambda}$) is an excellent approximation to the Poisson distribution. If λ is greater than about 10, then the normal distribution is a good approximation if an appropriate continuity correction is performed, i.e., if P(X ≤ x), where x is a non-negative integer, is replaced by P(X ≤ x + 0.5).

- Variance-stabilizing transformation: When a variable is Poisson distributed, its square root is approximately normally distributed with expected value of about $\sqrt{\lambda}$ and variance of about 1/4.[31]:196[9]:163 Under this transformation, the convergence to normality (as λ increases) is far faster than for the untransformed variable.[citation needed] Other, slightly more complicated, variance stabilizing transformations are available,[9]:163 one of which is the Anscombe transform. See Data transformation (statistics) for more general uses of transformations.

- If for every t > 0 the number of arrivals in the time interval [0, t] follows the Poisson distribution with mean λt, then the sequence of inter-arrival times are independent and identically distributed exponential random variables having mean 1/λ.[32]:317–319

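- The cumulative distribution functions of the Poisson and chi-squared distributions are related in the following ways:[9]:171

$$F_\text{Poisson}(k; \lambda) = 1 - F_{\chi^2}(2\lambda; 2(k + 1)) \quad \text{for integer } k,$$

and[9]:153

$$P(X = k) = F_{\chi^2}(2\lambda; 2k) - F_{\chi^2}(2\lambda; 2(k + 1)).$$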
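The sketch below (plain Python; all helper names are hypothetical) numerically checks three of the relationships listed above: the binomial thinning of a Poisson count, the variance of about 1/4 after the square-root transformation, and, by simulation, the link between exponential inter-arrival times and Poisson counts. The simulation uses that link in the converse direction (generating exponential gaps and counting arrivals), which is also a standard property of the Poisson process. The truncation points and the random seed are arbitrary choices.

```python
import math
import random


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def thinned_pmf(y: int, lam: float, p: float, k_max: int = 200) -> float:
    """P(Y = y) when X ~ Pois(lam) and Y | X = k ~ Binom(k, p), by total probability.

    Truncating the sum at k_max assumes the Poisson tail beyond it is negligible."""
    return sum(poisson_pmf(k, lam) * math.comb(k, y) * p**y * (1 - p) ** (k - y)
               for k in range(y, k_max + 1))


def sqrt_variance(lam: float) -> float:
    """Var(sqrt(X)) for X ~ Pois(lam), by truncated summation (cut-off chosen generously)."""
    k_max = int(lam + 20 * math.sqrt(lam) + 20)
    probs = [poisson_pmf(k, lam) for k in range(k_max + 1)]
    mean_root = sum(math.sqrt(k) * p for k, p in enumerate(probs))
    return sum(k * p for k, p in enumerate(probs)) - mean_root**2   # E[X] - (E[sqrt X])^2


def count_arrivals(rate: float, t: float, rng: random.Random) -> int:
    """Arrivals in [0, t] when gaps between arrivals are i.i.d. Exponential(rate)."""
    n, clock = 0, rng.expovariate(rate)     # expovariate takes the rate, i.e. 1 / mean
    while clock <= t:
        n += 1
        clock += rng.expovariate(rate)
    return n


print([round(thinned_pmf(y, 6.0, 0.3), 6) for y in range(4)])   # thinning: lam = 6, p = 0.3
print([round(poisson_pmf(y, 1.8), 6) for y in range(4)])        # Pois(6 * 0.3)
print(sqrt_variance(100.0))                                      # close to 1/4

rng = random.Random(12345)                   # fixed seed for reproducibility
counts = [count_arrivals(2.0, 3.0, rng) for _ in range(20_000)]
print(sum(counts) / len(counts))             # close to rate * t = 6
```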

Occurrence

Applications of the Poisson distribution can be found in many fields including:[33]

- Telecommunication example: telephone calls arriving in a system.

- Astronomy example: photons arriving at a telescope.

- Chemistry example: the molar mass distribution of a living polymerization.[34]

- Biology example: the number of mutations on a strand of DNA per unit length.

- Management example: customers arriving at a counter or call centre.

- Finance and insurance example: number of losses or claims occurring in a given period of time.

- Earthquake seismology example: an asymptotic Poisson model of seismic risk for large earthquakes.[35]

- Radioactivity example: number of decays in a given time interval in a radioactive sample.

The Poisson distribution arises in connection with Poisson processes. It applies to various phenomena of discrete properties (that is, those that may happen 0, 1, 2, 3, ... times during a given period of time or in a given area) whenever the probability of the phenomenon happening is constant in time or space. Examples of events that may be modelled as a Poisson distribution include:

- The number of soldiers killed by horse-kicks each year in each corps in the Prussian cavalry. This example was used in a book by Ladislaus Bortkiewicz (1868–1931).[13]:23-25

- The number of yeast cells used when brewing Guinness beer. This example was used by William Sealy Gosset (1876–1937).[36][37]

- The number of phone calls arriving at a call centre within a minute. This example was described by A.K. Erlang (1878–1929).[38]

- Internet traffic.

- The number of goals in sports involving two competing teams.[39]

- The number of deaths per year in a given age group.

- The number of jumps in a stock price in a given time interval.

- Under an assumption of homogeneity, the number of times a web server is accessed per minute.

- The number of mutations in a given stretch of DNA after a certain amount of radiation.

- The proportion of cells that will be infected at a given multiplicity of infection.

- The number of bacteria in a certain amount of liquid.[40]

- The arrival of photons on a pixel circuit at a given illumination and over a given time period.

- The targeting of V-1 flying bombs on London during World War II investigated by R. D. Clarke in 1946.[41]

Gallagher showed in 1976 that the counts of prime numbers in short intervals obey a Poisson distribution[42] provided a certain version of the unproved prime r-tuple conjecture of Hardy-Littlewood[43] is true.

Law of rare events

Comparison of the Poisson distribution (black lines) and the binomial distribution with n = 10 (red circles), n = 20 (blue circles), n = 1000 (green circles). All distributions have a mean of 5. The horizontal axis shows the number of events k. As n gets larger, the Poisson distribution becomes an increasingly better approximation for the binomial distribution with the same mean.

The rate of an event is related to the probability of an event occurring in some small subinterval (of time, space or otherwise). In the case of the Poisson distribution, one assumes that there exists a small enough subinterval for which the probability of an event occurring twice is 'negligible'. With this assumption one can derive the Poisson distribution from the binomial one, given only the information of expected number of total events in the whole interval. Let this total number be λ. Divide the whole interval into n subintervals $I_1, \dots, I_n$ of equal size, such that n > λ (since we are interested in only very small portions of the interval this assumption is meaningful). This means that the expected number of events in each subinterval $I_i$ is equal to λ/n. Now we assume that the occurrence of an event in the whole interval can be seen as a sequence of n Bernoulli trials, where the i-th trial corresponds to looking whether an event happens at the subinterval $I_i$ with probability λ/n. The expected number of total events in n such trials would be λ, the expected number of total events in the whole interval. Hence for each subdivision of the interval we have approximated the occurrence of the event as a Bernoulli process of the form B(n, λ/n). As we have noted before we want to consider only very small subintervals. Therefore, we take the limit as n goes to infinity. In this case the binomial distribution converges to what is known as the Poisson distribution by the Poisson limit theorem:

$$\lim_{n \to \infty} \binom{n}{k} \left(\frac{\lambda}{n}\right)^k \left(1 - \frac{\lambda}{n}\right)^{n - k} = \frac{\lambda^k e^{-\lambda}}{k!}.$$
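The convergence can be seen numerically. The sketch below (hypothetical helper names) measures the largest pointwise gap between $B(n, \lambda/n)$ and $\operatorname{Pois}(\lambda)$ for the three values of n used in the figure, with λ = 5; the gap should shrink as n grows.

```python
import math


def binom_pmf(k: int, n: int, p: float) -> float:
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k: int, lam: float) -> float:
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def max_abs_gap(n: int, lam: float) -> float:
    """Largest pointwise difference between B(n, lam/n) and Pois(lam) over k = 0..n."""
    p = lam / n
    return max(abs(binom_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(n + 1))


# Mean 5 throughout, as in the figure.
for n in (10, 20, 1000):
    print(n, max_abs_gap(n, 5.0))
```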

In several of the above examples, such as the number of mutations in a given sequence of DNA, the events being counted are actually the outcomes of discrete trials, and would more precisely be modelled using the binomial distribution, that is

$$X \sim \operatorname{B}(n, p).$$

In such cases n is very large and p is very small (and so the expectation np is of intermediate magnitude). Then the distribution may be approximated by the less cumbersome Poisson distribution[citation needed]

$$X \sim \operatorname{Pois}(np).$$

This approximation is sometimes known as the law of rare events,[44]:5 since each of the n individual Bernoulli events rarely occurs. The name may be misleading because the total count of success events in a Poisson process need not be rare if the parameter np is not small. For example, the number of telephone calls to a busy switchboard in one hour follows a Poisson distribution with the events appearing frequent to the operator, but they are rare from the point of view of the average member of the population who is very unlikely to make a call to that switchboard in that hour.

The word law is sometimes used as a synonym of probability distribution, and convergence in law means convergence in distribution. Accordingly, the Poisson distribution is sometimes called the 'law of small numbers' because it is the probability distribution of the number of occurrences of an event that happens rarely but has very many opportunities to happen. The Law of Small Numbers is a book by Ladislaus Bortkiewicz about the Poisson distribution, published in 1898.[13][45]

Poisson point process

The Poisson distribution arises as the number of points of a Poisson point process located in some finite region. More specifically, if D is some region of space, for example Euclidean space $\mathbb{R}^d$, for which |D|, the area, volume or, more generally, the Lebesgue measure of the region is finite, and if N(D) denotes the number of points in D, then, for a homogeneous process with intensity λ,

$$P(N(D) = k) = \frac{(\lambda |D|)^k e^{-\lambda |D|}}{k!}.$$

Other applications in science

In a Poisson process, the number of observed occurrences fluctuates about its mean λ with a standard deviation $\sigma_k = \sqrt{\lambda}$. These fluctuations are denoted as Poisson noise or (particularly in electronics) as shot noise.

The correlation of the mean and standard deviation in counting independent discrete occurrences is useful scientifically. By monitoring how the fluctuations vary with the mean signal, one can estimate the contribution of a single occurrence, even if that contribution is too small to be detected directly. For example, the charge e on an electron can be estimated by correlating the magnitude of an electric current with its shot noise. If N electrons pass a point in a given time t on the average, the mean current is $I = eN/t$; since the current fluctuations should be of the order $\sigma_I = e\sqrt{N}/t$ (i.e., the standard deviation of the Poisson process), the charge can be estimated from the ratio $t \sigma_I^2 / I$, because $t \sigma_I^2 / I = t \cdot \frac{e^2 N}{t^2} \cdot \frac{t}{eN} = e$.[citation needed]

An everyday example is the graininess that appears as photographs are enlarged; the graininess is due to Poisson fluctuations in the number of reduced silver grains, not to the individual grains themselves. By correlating the graininess with the degree of enlargement, one can estimate the contribution of an individual grain (which is otherwise too small to be seen unaided).[citation needed] Many other molecular applications of Poisson noise have been developed, e.g., estimating the number density of receptor molecules in a cell membrane.

In Causal Set theory the discrete elements of spacetime follow a Poisson distribution in the volume.

Generating Poisson-distributed random variables

A simple algorithm to generate random Poisson-distributed numbers (pseudo-random number sampling) has been given by Knuth:[46]:137-138

The complexity is linear in the returned value k, which is λ on average. There are many other algorithms to improve this. Some are given in Ahrens & Dieter, see § References below.
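Knuth's method multiplies independent uniform random numbers until their running product falls to $e^{-\lambda}$ or below, and returns the number of factors minus one. A Python sketch, assuming the standard-library `random.Random` as the uniform source (the function name is hypothetical):

```python
import math
import random


def poisson_knuth(lam: float, rng: random.Random) -> int:
    """Knuth's multiplication method for small lam.

    Multiplies uniforms until the product drops to e^-lam or below and returns the
    number of factors minus one. The expected number of iterations is about lam + 1.
    e^-lam underflows to 0.0 for lam above roughly 745, so this form suits small lam only."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        k += 1
        p *= rng.random()          # uniform on [0, 1)
        if p <= threshold:
            return k - 1


rng = random.Random(2024)
print([poisson_knuth(4.0, rng) for _ in range(10)])
```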

For large values of λ, the value of $L = e^{-\lambda}$ may be so small that it is hard to represent. This can be solved by a change to the algorithm which uses an additional parameter STEP such that $e^{-\text{STEP}}$ does not underflow:[citation needed]

The choice of STEP depends on the threshold of overflow. For double-precision floating-point format, the threshold is near $e^{700}$, so 500 should be a safe STEP.
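A sketch of that modification in Python, under the same assumptions as before (hypothetical function name, `random.Random` as the uniform source). Instead of comparing the product of uniforms with $e^{-\lambda}$, the product is multiplied back up by $e^{\text{STEP}}$ (or by $e$ raised to whatever part of λ remains) whenever it drops below 1, and sampling stops once all of λ has been used and the product is at most 1. This is equivalent to Knuth's stopping rule but never forms $e^{-\lambda}$ itself.

```python
import math
import random

STEP = 500.0   # e^500 is about 1.4e217, well below the double overflow threshold near e^709


def poisson_knuth_step(lam: float, rng: random.Random, step: float = STEP) -> int:
    """Knuth's method with the e^-lam threshold folded into the running product in chunks."""
    lam_left, k, p = lam, 0, 1.0
    while True:
        k += 1
        p *= rng.random()
        # Rescale while the product is below 1 and some of lam has not been used yet.
        while p < 1.0 and lam_left > 0.0:
            if lam_left > step:
                p *= math.exp(step)
                lam_left -= step
            else:
                p *= math.exp(lam_left)
                lam_left = 0.0
        # With all of lam used, p <= 1 means the product of uniforms is <= e^-lam.
        if p <= 1.0:
            return k - 1


rng = random.Random(2024)
print([poisson_knuth_step(1200.0, rng) for _ in range(5)])   # e^-1200 would underflow to 0.0
```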

Other solutions for large values of λ include rejection sampling and using Gaussian approximation.

Inverse transform sampling is simple and efficient for small values of λ, and requires only one uniform random number u per sample. Cumulative probabilities are examined in turn until one exceeds u.
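A sketch of inversion by sequential search (hypothetical function name; standard-library `random.Random` as the uniform source), using the recurrence $P(X = x) = P(X = x - 1)\,\lambda/x$ so that each step costs one multiplication:

```python
import math
import random


def poisson_inversion(lam: float, rng: random.Random) -> int:
    """Inverse transform sampling by sequential search over the CDF (small lam only).

    One uniform u is drawn; P(X = 0), P(X = 1), ... are added until the running CDF
    reaches u. The p > 0 guard stops the search if rounding leaves the accumulated
    CDF just below u and the terms have underflowed to zero."""
    x = 0
    p = math.exp(-lam)   # P(X = 0)
    s = p                # running CDF, P(X <= x)
    u = rng.random()
    while u > s and p > 0.0:
        x += 1
        p *= lam / x     # P(X = x) = P(X = x - 1) * lam / x
        s += p
    return x


rng = random.Random(2024)
print([poisson_inversion(2.0, rng) for _ in range(10)])
```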

Parameter estimation

Maximum likelihood

Given a sample of n measured values $k_i \in \{0, 1, \dots\}$, for i = 1, ..., n, we wish to estimate the value of the parameter λ of the Poisson population from which the sample was drawn. The maximum likelihood estimate is [48]

$$\hat{\lambda}_\text{MLE} = \frac{1}{n} \sum_{i=1}^n k_i.$$

Since each observation has expectation λ, so does this sample mean. Therefore, the maximum likelihood estimate is an unbiased estimator of λ. It is also an efficient estimator, i.e. its estimation variance achieves the Cramér–Rao lower bound (CRLB).[citation needed] Hence it is minimum-variance unbiased. Also it can be proven that the sum (and hence the sample mean as it is a one-to-one function of the sum) is a complete and sufficient statistic for λ.

To prove sufficiency we may use the factorization theorem. Consider partitioning the probability mass function of the joint Poisson distribution for the sample into two parts: one that depends solely on the sample $\mathbf{x}$ (called $h(\mathbf{x})$) and one that depends on the parameter λ and the sample $\mathbf{x}$ only through the function $T(\mathbf{x})$. Then $T(\mathbf{x})$ is a sufficient statistic for λ.

$$P(\mathbf{x}) = \prod_{i=1}^n \frac{\lambda^{x_i} e^{-\lambda}}{x_i!} = \frac{1}{\prod_{i=1}^n x_i!} \times \lambda^{\sum_{i=1}^n x_i} e^{-n\lambda}$$

The first term, $h(\mathbf{x})$, depends only on $\mathbf{x}$. The second term, $g(T(\mathbf{x}) \mid \lambda)$, depends on the sample only through $T(\mathbf{x}) = \sum_{i=1}^n x_i$. Thus, $T(\mathbf{x})$ is sufficient.

To find the parameter λ that maximizes the probability function for the Poisson population, we can use the logarithm of the likelihood function:

$$\ell(\lambda) = \ln \prod_{i=1}^n f(k_i \mid \lambda) = \sum_{i=1}^n \ln\left(\frac{e^{-\lambda} \lambda^{k_i}}{k_i!}\right) = -n\lambda + \left(\sum_{i=1}^n k_i\right) \ln(\lambda) - \sum_{i=1}^n \ln(k_i!).$$

We take the derivative of $\ell$ with respect to λ and set it equal to zero:

$$\frac{\mathrm{d}}{\mathrm{d}\lambda} \ell(\lambda) = -n + \left(\sum_{i=1}^n k_i\right) \frac{1}{\lambda} = 0.$$

Solving for λ gives a stationary point:

$$\lambda = \frac{\sum_{i=1}^n k_i}{n}.$$

So λ is the average of the $k_i$ values. Obtaining the sign of the second derivative of $\ell$ at the stationary point will determine what kind of extreme value λ is.

Evaluating the second derivative at the stationary point gives:

$$\frac{\partial^2 \ell}{\partial \lambda^2} = -\lambda^{-2} \sum_{i=1}^n k_i = -\frac{n^2}{\sum_{i=1}^n k_i},$$

which is the negative of n times the reciprocal of the average of the $k_i$. This expression is negative when the average is positive. If this is satisfied, then the stationary point maximizes the probability function.
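The closed-form estimate can be cross-checked against the log-likelihood directly. In this sketch the sample is made up for illustration (not real data), the grid scan is deliberately crude, and the second derivative is approximated by central differences to compare with $-n^2/\sum k_i$; the helper names are hypothetical.

```python
import math


def log_likelihood(lam: float, ks: list[int]) -> float:
    """l(lam) = -n*lam + (sum of k_i) * ln(lam) - sum of ln(k_i!), as derived above."""
    return -len(ks) * lam + sum(ks) * math.log(lam) - sum(math.lgamma(k + 1) for k in ks)


def poisson_mle(ks: list[int]) -> float:
    """Closed-form maximizer of the log-likelihood: the sample mean."""
    return sum(ks) / len(ks)


sample = [2, 4, 3, 0, 5, 1, 3, 2]            # illustrative counts, not real data
lam_hat = poisson_mle(sample)

# Crude numerical cross-check: scan a grid over (0, 10] and keep the best point.
grid = [0.01 * i for i in range(1, 1001)]
best = max(grid, key=lambda lam: log_likelihood(lam, sample))

# Second derivative at lam_hat by central differences, versus -n^2 / sum(k_i).
h = 1e-3
second = (log_likelihood(lam_hat + h, sample) - 2 * log_likelihood(lam_hat, sample)
          + log_likelihood(lam_hat - h, sample)) / h**2
print(lam_hat, best, second, -len(sample) ** 2 / sum(sample))
```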

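For completeness, a family of distributions is said to be complete if and only if $\operatorname{E}(g(T)) = 0$ implies that $P_\lambda(g(T) = 0) = 1$ for all λ. If the individual $X_i$ are iid $\operatorname{Pois}(\lambda)$, then $T(\mathbf{x}) = \sum_{i=1}^n X_i \sim \operatorname{Pois}(n\lambda)$. Knowing the distribution we want to investigate, it is easy to see that the statistic is complete:

$$\operatorname{E}(g(T)) = \sum_{t=0}^{\infty} g(t) \frac{(n\lambda)^t e^{-n\lambda}}{t!} = 0.$$

For this equality to hold, g(t) must be 0. This follows from the fact that none of the other terms will be 0 for all t in the sum and for all possible values of λ. Hence, $\operatorname{E}(g(T)) = 0$ for all λ implies that $P_\lambda(g(T) = 0) = 1$, and the statistic has been shown to be complete.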

Confidence interval

The confidence interval for the mean of a Poisson distribution can be expressed using the relationship between the cumulative distribution functions of the Poisson and chi-squared distributions. The chi-squared distribution is itself closely related to the gamma distribution, and this leads to an alternative expression. Given an observation k from a Poisson distribution with mean μ, a confidence interval for μ with confidence level 1 – α is

$$\tfrac{1}{2} \chi^2(\alpha/2; 2k) \le \mu \le \tfrac{1}{2} \chi^2(1 - \alpha/2; 2k + 2),$$

or equivalently,

$$F^{-1}(\alpha/2; k, 1) \le \mu \le F^{-1}(1 - \alpha/2; k + 1, 1),$$

where $\chi^2(p; n)$ is the quantile function (corresponding to a lower tail area p) of the chi-squared distribution with n degrees of freedom and $F^{-1}(p; n, 1)$ is the quantile function of a gamma distribution with shape parameter n and scale parameter 1 (for k = 0 the lower bound is 0).[9]:171[49] This interval is 'exact' in the sense that its coverage probability is never less than the nominal 1 – α.

When quantiles of the gamma distribution are not available, an accurate approximation to this exact interval has been proposed (based on the Wilson–Hilferty transformation):[50]

$$k \left(1 - \frac{1}{9k} - \frac{z_{\alpha/2}}{3\sqrt{k}}\right)^3 \le \mu \le (k + 1) \left(1 - \frac{1}{9(k + 1)} + \frac{z_{\alpha/2}}{3\sqrt{k + 1}}\right)^3,$$

where $z_{\alpha/2}$ denotes the standard normal deviate with upper tail area α / 2.

For application of these formulae in the same context as above (given a sample of n measured values $k_i$ each drawn from a Poisson distribution with mean λ), one would set

$$k = \sum_{i=1}^n k_i,$$

calculate an interval for μ = nλ, and then derive the interval for λ.
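A sketch of the exact interval in plain Python. Rather than calling a chi-squared or gamma quantile function (none is in the standard library), it solves for the bounds by bisection on the Poisson CDF: the lower bound is the μ at which $P(X \ge k) = \alpha/2$ and the upper bound the μ at which $P(X \le k) = \alpha/2$, which by the Poisson and chi-squared relationship are the same bounds as the quantile form. The Wilson–Hilferty approximation is computed alongside for comparison. Helper names and the search bracket are assumptions, adequate for moderate k only.

```python
import math
from statistics import NormalDist


def poisson_cdf(k: int, mu: float) -> float:
    """P(X <= k) for X ~ Pois(mu), by the PMF recurrence (fine for moderate mu)."""
    p = cdf = math.exp(-mu)
    for j in range(1, k + 1):
        p *= mu / j
        cdf += p
    return cdf


def _bisect(f, lo: float, hi: float, iters: int = 200) -> float:
    """Root of a decreasing function f on [lo, hi] by bisection."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def exact_interval(k: int, alpha: float = 0.05) -> tuple[float, float]:
    """Exact interval for mu given one Poisson count k (lower bound 0 when k = 0)."""
    hi = 10.0 * (k + 10)   # generous search bracket, an assumption suited to moderate k
    lower = 0.0 if k == 0 else _bisect(lambda mu: alpha / 2 - (1 - poisson_cdf(k - 1, mu)), 0.0, hi)
    upper = _bisect(lambda mu: poisson_cdf(k, mu) - alpha / 2, 0.0, hi)
    return lower, upper


def wilson_hilferty_interval(k: int, alpha: float = 0.05) -> tuple[float, float]:
    z = NormalDist().inv_cdf(1 - alpha / 2)   # standard normal deviate, upper tail alpha/2
    lower = 0.0 if k == 0 else k * (1 - 1 / (9 * k) - z / (3 * math.sqrt(k))) ** 3
    upper = (k + 1) * (1 - 1 / (9 * (k + 1)) + z / (3 * math.sqrt(k + 1))) ** 3
    return lower, upper


sample = [2, 4, 3, 0, 5, 1, 3, 2]   # illustrative counts, not real data
n, k = len(sample), sum(sample)
mu_lo, mu_hi = exact_interval(k)
print("95% interval for lambda:", mu_lo / n, mu_hi / n)
print(exact_interval(10), wilson_hilferty_interval(10))
```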

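Bayesian inference

In Bayesian inference, the conjugate prior for the rate parameter λ of the Poisson distribution is the gamma distribution.[51] Let

$$\lambda \sim \operatorname{Gamma}(\alpha, \beta)$$

denote that λ is distributed according to the gamma density g parameterized in terms of a shape parameter α and an inverse scale parameter β:

$$g(\lambda \mid \alpha, \beta) = \frac{\beta^\alpha}{\Gamma(\alpha)} \lambda^{\alpha - 1} e^{-\beta \lambda} \quad \text{for } \lambda > 0.$$

Then, given the same sample of n measured values $k_i$ as before, and a prior of Gamma(α, β), the posterior distribution is

$$\lambda \sim \operatorname{Gamma}\left(\alpha + \sum_{i=1}^n k_i, \beta + n\right).$$

The posterior mean $\operatorname{E}[\lambda] = \left(\alpha + \sum_{i=1}^n k_i\right)/(\beta + n)$ approaches the maximum likelihood estimate $\hat{\lambda}_\text{MLE}$ in the limit as $\alpha \to 0, \beta \to 0$, which follows immediately from the general expression of the mean of the gamma distribution.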

The posterior predictive distribution for a single additional observation is a negative binomial distribution,[52]:53 sometimes called a Gamma–Poisson distribution.
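The conjugate update is simple enough to write out. This is a sketch with hypothetical helper names, using the shape/rate parameterization above; for the posterior predictive it uses the negative binomial with $r$ equal to the posterior shape and success probability $\beta/(\beta + 1)$ for the posterior rate β, which is one common way of writing that distribution (other references parameterize it differently).

```python
import math


def gamma_poisson_update(alpha: float, beta: float, ks: list[int]) -> tuple[float, float]:
    """Posterior Gamma(shape, rate) for lam after Poisson counts ks under a Gamma(alpha, beta) prior."""
    return alpha + sum(ks), beta + len(ks)


def posterior_mean(alpha: float, beta: float) -> float:
    return alpha / beta     # mean of Gamma(shape=alpha, rate=beta)


def predictive_pmf(k: int, alpha: float, beta: float) -> float:
    """P(next count = k): negative binomial with r = alpha and p = beta / (beta + 1)."""
    p = beta / (beta + 1.0)
    log_coef = math.lgamma(k + alpha) - math.lgamma(alpha) - math.lgamma(k + 1)
    return math.exp(log_coef + alpha * math.log(p) + k * math.log(1.0 - p))


a, b = gamma_poisson_update(2.0, 1.0, [3, 5, 4])   # prior Gamma(2, 1), three observed counts
print(a, b, posterior_mean(a, b), [round(predictive_pmf(k, a, b), 4) for k in range(5)])
```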

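Simultaneous estimation of multiple Poisson means

Suppose $X_1, X_2, \dots, X_p$ is a set of independent random variables from a set of p Poisson distributions, each with a parameter $\lambda_i$, $i = 1, \dots, p$, and we would like to estimate these parameters. Then, Clevenson and Zidek show that under the normalized squared error loss $L(\lambda, \hat{\lambda}) = \sum_{i=1}^p \lambda_i^{-1} (\hat{\lambda}_i - \lambda_i)^2$, when p > 1, then, similarly to Stein's example for the Normal means, the MLE estimator $\hat{\lambda}_i = X_i$ is inadmissible.[53]

In this case, a family of minimax estimators is given for any $0 < c \le 2(p - 1)$ and $b \ge (p - 2 + p^{-1})$ as[54]

$$\hat{\lambda}_i = \left(1 - \frac{c}{b + \sum_{i=1}^p X_i}\right) X_i, \quad i = 1, \dots, p.$$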

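Bivariate Poisson distribution

This distribution has been extended to the bivariate case.[55] The generating function for this distribution is

$$g(u, v) = \exp[(\theta_1 - \theta_{12})(u - 1) + (\theta_2 - \theta_{12})(v - 1) + \theta_{12}(uv - 1)]$$

with

$$\theta_1, \theta_2 > \theta_{12} > 0.$$

The marginal distributions are Poisson(θ1) and Poisson(θ2) and the correlation coefficient is limited to the range

$$0 \le \rho \le \min\left\{\sqrt{\frac{\theta_1}{\theta_2}}, \sqrt{\frac{\theta_2}{\theta_1}}\right\}.$$

A simple way to generate a bivariate Poisson distribution $X_1, X_2$ is to take three independent Poisson distributions $Y_1, Y_2, Y_3$ with means $\lambda_1, \lambda_2, \lambda_3$ and then set $X_1 = Y_1 + Y_3$, $X_2 = Y_2 + Y_3$. The probability function of the bivariate Poisson distribution is

$$\Pr(X_1 = k_1, X_2 = k_2) = \exp(-\lambda_1 - \lambda_2 - \lambda_3) \frac{\lambda_1^{k_1}}{k_1!} \frac{\lambda_2^{k_2}}{k_2!} \sum_{k=0}^{\min(k_1, k_2)} \binom{k_1}{k} \binom{k_2}{k} k! \left(\frac{\lambda_3}{\lambda_1 \lambda_2}\right)^k.$$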
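The probability function can be checked against the construction it comes from. In this sketch (hypothetical names; the means 1, 2 and 0.5 are arbitrary) $\theta_1 = \lambda_1 + \lambda_3$, $\theta_2 = \lambda_2 + \lambda_3$ and $\theta_{12} = \lambda_3$, so the marginals should be Pois(1.5) and Pois(2.5) and the covariance of $X_1$ and $X_2$ should equal $\lambda_3$.

```python
import math


def bivariate_poisson_pmf(k1: int, k2: int, lam1: float, lam2: float, lam3: float) -> float:
    """P(X1 = k1, X2 = k2) for X1 = Y1 + Y3, X2 = Y2 + Y3 with independent Yi ~ Pois(lam_i)."""
    base = (math.exp(-lam1 - lam2 - lam3)
            * lam1**k1 / math.factorial(k1)
            * lam2**k2 / math.factorial(k2))
    ratio = lam3 / (lam1 * lam2)
    total = sum(math.comb(k1, k) * math.comb(k2, k) * math.factorial(k) * ratio**k
                for k in range(min(k1, k2) + 1))
    return base * total


lam1, lam2, lam3 = 1.0, 2.0, 0.5   # theta1 = 1.5, theta2 = 2.5, theta12 = 0.5
# P(0, 0) should be e^-(lam1 + lam2 + lam3): all three Y's must be zero.
print(bivariate_poisson_pmf(0, 0, lam1, lam2, lam3), math.exp(-3.5))
```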

Computer software for the Poisson distribution

The Poisson distribution poses two different tasks for dedicated software libraries: evaluating the distribution $P(k; \lambda)$, and drawing random numbers according to that distribution.

Evaluating the Poisson distribution[edit]

Computing $f(k;\lambda)$ for given $k$ and $\lambda$ is a trivial task that can be accomplished by using the standard definition of $f(k;\lambda)$ in terms of exponential, power, and factorial functions. However, the conventional definition of the Poisson distribution contains two terms that can easily overflow on computers: $\lambda^k$ and $k!$. The fraction of $\lambda^k$ to $k!$ can also produce a rounding error that is very large compared to $e^{-\lambda}$, and therefore give an erroneous result. For numerical stability the Poisson probability mass function should therefore be evaluated as

$$f(k;\lambda) = \exp\left[k \ln\lambda - \lambda - \ln\Gamma(k+1)\right],$$

which is mathematically equivalent but numerically stable. The natural logarithm of the Gamma function can be obtained using the lgamma function in the C standard library (C99 version) or R, the gammaln function in MATLAB or SciPy, or the log_gamma function in Fortran 2008 and later.

Some computing languages provide built-in functions to evaluate the Poisson distribution, namely

- R: function dpois(x, lambda);

- Excel: function POISSON( x, mean, cumulative), with a flag to specify the cumulative distribution;

- Mathematica: univariate Poisson distribution as PoissonDistribution[λ],[56] bivariate Poisson distribution as MultivariatePoissonDistribution[θ12, {θ1 − θ12, θ2 − θ12}].[57]
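A minimal Python sketch of the log-space evaluation, using the standard library's math.lgamma; the naive version is included only for comparison, and both function names are hypothetical. SciPy users would more typically call scipy.stats.poisson.pmf or scipy.stats.poisson.logpmf.

```python
import math


def poisson_pmf_naive(k, lam):
    """Textbook formula; lam**k and k! overflow for moderately large k."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def poisson_pmf(k, lam):
    """Evaluate exp(k ln(lam) - lam - ln Gamma(k + 1)) to avoid overflow."""
    if k < 0:
        return 0.0
    if lam == 0:
        return 1.0 if k == 0 else 0.0
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


print(poisson_pmf(3, 2.5), poisson_pmf_naive(3, 2.5))  # agree up to rounding
print(poisson_pmf(1000, 1000.0))  # about 1 / sqrt(2 * pi * 1000)
try:
    poisson_pmf_naive(1000, 1000.0)
except OverflowError as err:
    print("naive formula fails:", err)
```

In the naive version, 1000.0 ** 1000 exceeds the double-precision range and Python raises OverflowError, while the log-space version returns a finite value close to the Stirling approximation $1/\sqrt{2\pi\lambda}$.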

Random drawing from the Poisson distribution

The less trivial task is to draw random integers from the Poisson distribution with given $\lambda$.

Solutions are provided by:

- R: function rpois(n, lambda);

- GNU Scientific Library (GSL): function gsl_ran_poisson
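For small $\lambda$, one classical approach is the multiplication method given in Knuth's Seminumerical Algorithms (listed in the citations): multiply uniform random numbers until the product falls below $e^{-\lambda}$, and return the number of factors minus one. The following is a minimal standard-library sketch with a hypothetical function name; it is not the algorithm used by R or GSL.

```python
import math
import random


def poisson_knuth(lam, rng=random):
    """Draw one Poisson(lam) variate by Knuth's multiplication method.

    The expected number of uniforms used is lam + 1, and exp(-lam)
    underflows to 0.0 for lam above roughly 745, so this is only
    suitable for small to moderate means.
    """
    if lam < 0:
        raise ValueError("lam must be non-negative")
    limit = math.exp(-lam)
    k, prod = 0, rng.random()
    while prod > limit:
        k += 1
        prod *= rng.random()
    return k


rng = random.Random(42)
draws = [poisson_knuth(4.0, rng) for _ in range(20000)]
mean = sum(draws) / len(draws)
var = sum((d - mean) ** 2 for d in draws) / len(draws)
print(f"sample mean ~ {mean:.3f}, sample variance ~ {var:.3f}")  # both near 4
```

Because the running time grows linearly with $\lambda$, large means call for other methods, such as the ones described by Ahrens and Dieter (see Sources).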

References

Citations

- Haight, Frank A. (1967), Handbook of the Poisson Distribution, New York, USA: John Wiley & Sons, ISBN 978-0-471-33932-8

- Brooks, E. Bruce (2007-08-24), Statistics | The Poisson Distribution, Warring States Project, Umass.edu, retrieved 2014-04-18

- Koehrsen, William (2019-01-20), The Poisson Distribution and Poisson Process Explained, Towards Data Science, retrieved 2019-09-19

- Ugarte, Maria Dolores; Militino, Ana F.; Arnholt, Alan T. (2016), Probability and Statistics with R (Second ed.), Boca Raton, FL, USA: CRC Press, ISBN 978-1-4665-0439-4

- Poisson, Siméon D. (1837), Probabilité des jugements en matière criminelle et en matière civile, précédées des règles générales du calcul des probabilités [Research on the Probability of Judgments in Criminal and Civil Matters] (in French), Paris, France: Bachelier

- de Moivre, Abraham (1711), 'De mensura sortis, seu, de probabilitate eventuum in ludis a casu fortuito pendentibus' [On the Measurement of Chance, or, on the Probability of Events in Games Depending Upon Fortuitous Chance], Philosophical Transactions of the Royal Society (in Latin), 27 (329): 213–264, doi:10.1098/rstl.1710.0018

- de Moivre, Abraham (1718), The Doctrine of Chances: Or, A Method of Calculating the Probability of Events in Play, London, Great Britain: W. Pearson

- de Moivre, Abraham (1721), 'Of the Laws of Chance', in Motte, Benjamin (ed.), The Philosophical Transactions from the Year MDCC (where Mr. Lowthorp Ends) to the Year MDCCXX. Abridg'd, and Dispos'd Under General Heads (in Latin), Vol. I, London, Great Britain: R. Wilkin, R. Robinson, S. Ballard, W. and J. Innys, and J. Osborn, pp. 190–219

- Johnson, N. L.; Kotz, S.; Kemp, A. W. (1993), Univariate Discrete Distributions (2nd ed.), New York, NY, USA: John Wiley, ISBN 0-471-54897-9

- Stigler, Stephen M. (1982), 'Poisson on the Poisson Distribution', Statistics & Probability Letters, 1 (1): 33–35, doi:10.1016/0167-7152(82)90010-4

- Hald, Anders; de Moivre, Abraham; McClintock, Bruce (1984), 'A. de Moivre: 'De Mensura Sortis' or 'On the Measurement of Chance'', International Statistical Review / Revue Internationale de Statistique, 52 (3): 229–262, doi:10.2307/1403045, JSTOR 1403045

- Newcomb, Simon (1860), 'Notes on the theory of probabilities', The Mathematical Monthly, 2 (4): 134–140

- von Bortkiewicz, Ladislaus (1898), Das Gesetz der kleinen Zahlen [The law of small numbers] (in German), Leipzig, Germany: B.G. Teubner. On page 1, Bortkiewicz presents the Poisson distribution. On pages 23–25, Bortkiewicz presents his analysis of '4. Beispiel: Die durch Schlag eines Pferdes im preussischen Heere Getöteten.' (4. Example: Those killed in the Prussian army by a horse's kick.)

- Yates, Roy D.; Goodman, David J. (2014), Probability and Stochastic Processes: A Friendly Introduction for Electrical and Computer Engineers (2nd ed.), Hoboken, USA: Wiley, ISBN 978-0-471-45259-1

- For the proof, see Proof wiki: expectation and Proof wiki: variance

- Helske, Jouni (2017), 'KFAS: Exponential family state space models in R', arXiv:1612.01907 [stat.CO]

- Choi, KP (1994), 'On the medians of Gamma distributions and an equation of Ramanujan', Proc Amer Math Soc, 121 (1): 245–251, doi:10.2307/2160389, JSTOR 2160389

- Riordan, John (1937), 'Moment Recurrence Relations for Binomial, Poisson and Hypergeometric Frequency Distributions' (PDF), Annals of Mathematical Statistics, 8 (2): 103–111, doi:10.1214/aoms/1177732430, JSTOR 2957598

- Jagadeesan, Meena (2017), 'Simple analysis of sparse, sign-consistent JL', arXiv:1708.02966 [cs.DS]

- Lehmann, Erich Leo (1986), Testing Statistical Hypotheses (second ed.), New York, NY, USA: Springer Verlag, ISBN 978-0-387-94919-2

- Raikov, Dmitry (1937), 'On the decomposition of Poisson laws', Comptes Rendus de l'Académie des Sciences de l'URSS, 14: 9–11

- von Mises, Richard (1964), Mathematical Theory of Probability and Statistics, New York, NY, USA: Academic Press, doi:10.1016/C2013-0-12460-9, ISBN 978-1-4832-3213-3

- Laha, Radha G.; Rohatgi, Vijay K. (1979), Probability Theory, New York, NY, USA: John Wiley & Sons, ISBN 978-0-471-03262-5

- Mitzenmacher, Michael; Upfal, Eli (2005), Probability and Computing: Randomized Algorithms and Probabilistic Analysis, Cambridge, UK: Cambridge University Press, ISBN 978-0-521-83540-4

- Short, Michael (2013), 'Improved Inequalities for the Poisson and Binomial Distribution and Upper Tail Quantile Functions', ISRN Probability and Statistics, 2013: 412958, doi:10.1155/2013/412958

- Kamath, Govinda M.; Şaşoğlu, Eren; Tse, David (2015), 'Optimal Haplotype Assembly from High-Throughput Mate-Pair Reads', 2015 IEEE International Symposium on Information Theory (ISIT), 14–19 June, Hong Kong, China, pp. 914–918, arXiv:1502.01975, doi:10.1109/ISIT.2015.7282588

- '1.7.7 - Relationship between the Multinomial and Poisson | STAT 504'

- Prins, Jack (2012), '6.3.3.1. Counts Control Charts', e-Handbook of Statistical Methods, NIST/SEMATECH, retrieved 2019-09-20

- Zhang, Huiming; Liu, Yunxiao; Li, Bo (2014), 'Notes on discrete compound Poisson model with applications to risk theory', Insurance: Mathematics and Economics, 59: 325–336, doi:10.1016/j.insmatheco.2014.09.012

- Zhang, Huiming; Li, Bo (2016), 'Characterizations of discrete compound Poisson distributions', Communications in Statistics - Theory and Methods, 45 (22): 6789–6802, doi:10.1080/03610926.2014.901375

- McCullagh, Peter; Nelder, John (1989), Generalized Linear Models, London, UK: Chapman and Hall, ISBN 978-0-412-31760-6

- Ross, Sheldon M. (2010), Introduction to Probability Models (tenth ed.), Boston, MA, USA: Academic Press, ISBN 978-0-12-375686-2

- Rasch, Georg (1963), 'The Poisson Process as a Model for a Diversity of Behavioural Phenomena' (PDF), 17th International Congress of Psychology, 2, Washington, DC, USA, August 20–26, 1963: American Psychological Association, doi:10.1037/e685262012-108

- Flory, Paul J. (1940), 'Molecular Size Distribution in Ethylene Oxide Polymers', Journal of the American Chemical Society, 62 (6): 1561–1565, doi:10.1021/ja01863a066

- Lomnitz, Cinna (1994), Fundamentals of Earthquake Prediction, New York: John Wiley & Sons, ISBN 0-471-57419-8, OCLC 647404423

- Student (1907), 'On the Error of Counting with a Haemacytometer', Biometrika, 5 (3): 351–360, doi:10.2307/2331633, JSTOR 2331633

- Boland, Philip J. (1984), 'A Biographical Glimpse of William Sealy Gosset', The American Statistician, 38 (3): 179–183, doi:10.1080/00031305.1984.10483195, JSTOR 2683648

- Erlang, Agner K. (1909), 'Sandsynlighedsregning og Telefonsamtaler' [Probability Calculation and Telephone Conversations], Nyt Tidsskrift for Matematik (in Danish), 20 (B): 33–39, JSTOR 24528622

- Hornby, Dave (2014), Football Prediction Model: Poisson Distribution, Sports Betting Online, retrieved 2014-09-19

- Koyama, Kento; Hokunan, Hidekazu; Hasegawa, Mayumi; Kawamura, Shuso; Koseki, Shigenobu (2016), 'Do bacterial cell numbers follow a theoretical Poisson distribution? Comparison of experimentally obtained numbers of single cells with random number generation via computer simulation', Food Microbiology, 60: 49–53, doi:10.1016/j.fm.2016.05.019

- Clarke, R. D. (1946), 'An application of the Poisson distribution' (PDF), Journal of the Institute of Actuaries, 72 (3): 481, doi:10.1017/S0020268100035435

- Gallagher, Patrick X. (1976), 'On the distribution of primes in short intervals', Mathematika, 23 (1): 4–9, doi:10.1112/s0025579300016442

- Hardy, Godfrey H.; Littlewood, John E. (1923), 'On some problems of 'partitio numerorum' III: On the expression of a number as a sum of primes', Acta Math., 44: 1–70, doi:10.1007/BF02403921

- Cameron, A. Colin; Trivedi, Pravin K. (1998), Regression Analysis of Count Data, Cambridge, UK: Cambridge University Press, ISBN 978-0-521-63567-7

- Edgeworth, Francis Y. (1913), 'On the use of the theory of probabilities in statistics relating to society', Journal of the Royal Statistical Society, 76 (2): 165–193, doi:10.2307/2340091, JSTOR 2340091

- Knuth, Donald Ervin (1997), Seminumerical Algorithms, The Art of Computer Programming, 2 (3rd ed.), Addison Wesley, ISBN 978-0-201-89684-8

- Devroye, Luc (1986), 'Discrete Univariate Distributions' (PDF), Non-Uniform Random Variate Generation, New York, NY, USA: Springer-Verlag, pp. 485–553, doi:10.1007/978-1-4613-8643-8_10

- Paszek, Ewa, 'Maximum Likelihood Estimation – Examples'

- Garwood, Frank (1936), 'Fiducial Limits for the Poisson Distribution', Biometrika, 28 (3/4): 437–442, doi:10.1093/biomet/28.3-4.437, JSTOR 2333958

- Breslow, Norman E.; Day, Nick E. (1987), Statistical Methods in Cancer Research: Volume 2—The Design and Analysis of Cohort Studies, Lyon, France: International Agency for Research on Cancer, ISBN 978-92-832-0182-3

- Fink, Daniel (1997), A Compendium of Conjugate Priors

- Gelman, Andrew; Carlin, John B.; Stern, Hal S.; Rubin, Donald B. (2003), Bayesian Data Analysis (2nd ed.), Boca Raton, FL, USA: Chapman & Hall/CRC, ISBN 1-58488-388-X

- Clevenson, M. Lawrence; Zidek, James V. (1975), 'Simultaneous Estimation of the Means of Independent Poisson Laws', Journal of the American Statistical Association, 70 (351): 698–705, doi:10.1080/01621459.1975.10482497, JSTOR 2285958

- Berger, James O. (1985), Statistical Decision Theory and Bayesian Analysis (2nd ed.), New York, NY, USA: Springer-Verlag, doi:10.1007/978-1-4757-4286-2, ISBN 978-0-387-96098-2

- Loukas, Sotirios; Kemp, C. David (1986), 'The Index of Dispersion Test for the Bivariate Poisson Distribution', Biometrics, 42 (4): 941–948, doi:10.2307/2530708, JSTOR 2530708

- 'Wolfram Language: PoissonDistribution reference page', wolfram.com, retrieved 2016-04-08

- 'Wolfram Language: MultivariatePoissonDistribution reference page', wolfram.com, retrieved 2016-04-08

Sources

- Ahrens, Joachim H.; Dieter, Ulrich (1974), 'Computer Methods for Sampling from Gamma, Beta, Poisson and Binomial Distributions', Computing, 12 (3): 223–246, doi:10.1007/BF02293108

- Ahrens, Joachim H.; Dieter, Ulrich (1982), 'Computer Generation of Poisson Deviates', ACM Transactions on Mathematical Software, 8 (2): 163–179, doi:10.1145/355993.355997

- Evans, Ronald J.; Boersma, J.; Blachman, N. M.; Jagers, A. A. (1988), 'The Entropy of a Poisson Distribution: Problem 87-6', SIAM Review, 30 (2): 314–317, doi:10.1137/1030059

Further reading

- Shanmugam, Ramalingam (2013). 'Informatics about fear to report rapes using bumped-up Poisson model'. American Journal of Biostatistics. 3 (1): 17–29. doi:10.3844/amjbsp.2013.17.29.


In probability, statistics and related fields, a Poisson point process is a type of random mathematical object that consists of points randomly located on a mathematical space.[1] The Poisson point process is often called simply the Poisson process, but it is also called a Poisson random measure, Poisson random point field or Poisson point field. This point process has convenient mathematical properties,[2] which has led to it being frequently defined in Euclidean space and used as a mathematical model for seemingly random processes in numerous disciplines such as astronomy,[3] biology,[4] ecology,[5] geology,[6] seismology,[7] physics,[8] economics,[9] image processing,[10] and telecommunications.[11][12]

The process is named after French mathematician Siméon Denis Poisson despite Poisson never having studied the process. Its name derives from the fact that if a collection of random points in some space forms a Poisson process, then the number of points in a region of finite size is a random variable with a Poisson distribution. The process was discovered independently and repeatedly in several settings, including experiments on radioactive decay, telephone call arrivals and insurance mathematics.[13][14]

The Poisson point process is often defined on the real line, where it can be considered as a stochastic process. In this setting, it is used, for example, in queueing theory[15] to model random events, such as the arrival of customers at a store, phone calls at an exchange or occurrence of earthquakes, distributed in time. In the plane, the point process, also known as a spatial Poisson process,[16] can represent the locations of scattered objects such as transmitters in a wireless network,[11][17][18][19] particles colliding into a detector, or trees in a forest.[20] In this setting, the process is often used in mathematical models and in the related fields of spatial point processes,[21] stochastic geometry,[1] spatial statistics[21][22] and continuum percolation theory.[23] The Poisson point process can be defined on more abstract spaces. Beyond applications, the Poisson point process is an object of mathematical study in its own right.[2] In all settings, the Poisson point process has the property that each point is stochastically independent of all the other points in the process, which is why it is sometimes called a purely or completely random process.[24] Despite its wide use as a stochastic model of phenomena representable as points, the inherent nature of the process implies that it does not adequately describe phenomena where there is sufficiently strong interaction between the points. This has inspired the proposal of other point processes, some of which are constructed with the Poisson point process, that seek to capture such interaction.[25]

The point process depends on a single mathematical object, which, depending on the context, may be a constant, a locally integrable function or, in more general settings, a Radon measure.[26] In the first case, the constant, known as the rate or intensity, is the average density of the points in the Poisson process located in some region of space. The resulting point process is called a homogeneous or stationary Poisson point process.[27] In the second case, the point process is called an inhomogeneous or nonhomogeneous Poisson point process, and the average density of points depends on the location in the underlying space of the Poisson point process.[28] The word point is often omitted,[29][2] but there are other Poisson processes of objects, which, instead of points, consist of more complicated mathematical objects such as lines and polygons, and such processes can be based on the Poisson point process.[30]


Overview of definitions

The Poisson point process is one of the most studied and used point processes, in both the field of probability and in more applied disciplines concerning random phenomena,[31][32] due to its convenient properties as a mathematical model as well as being mathematically interesting.[2] Depending on the setting, the process has several equivalent definitions[33] as well as definitions of varying generality owing to its many applications and characterizations.[34]

A Poisson point process is defined on some underlying mathematical space, called a carrier space,[35][36] or state space, though the latter term has a different meaning[a] in the context of stochastic processes. The Poisson point process can be defined, studied and used in one dimension, for example, on the real line, where it can be interpreted as a counting process or part of a queueing model;[41][42] in higher dimensions such as the plane where it plays a role in stochastic geometry[1] and spatial statistics;[43] or on more general mathematical spaces.[44] Consequently, the notation, terminology and level of mathematical rigour used to define and study the Poisson point process and point processes in general vary according to the context.[45] Despite all this, the Poisson point process has two key properties.

Poisson distribution of point counts

A Poisson point process is characterized via the Poisson distribution. The Poisson distribution is the probability distribution of a random variable $N$ (called a Poisson random variable) such that the probability that $N$ equals $n$ is given by:

$$P\{N = n\} = \frac{\Lambda^n}{n!} e^{-\Lambda}$$

where $n!$ denotes factorial and the parameter $\Lambda$ determines the shape of the distribution. (In fact, $\Lambda$ equals the expected value of $N$.)

By definition, a Poisson point process has the property that the number of points in a bounded region of its carrier space is a Poisson random variable.[46]

Complete independence

Consider a collection of disjoint and bounded subregions of the underlying space. By definition, the number of points of a Poisson point process in each bounded subregion will be completely independent of all the others.

This property is known under several names such as complete randomness, complete independence,[47] or independent scattering[48][49] and is common to all Poisson point processes. In other words, there is a lack of interaction between different regions and the points in general,[50] which motivates the Poisson process being sometimes called a purely or completely random process.[47]

Different settings

In all settings where the Poisson point process is used, the key properties—the Poisson property and the independence property—play an essential role.[26][46] The two properties are not logically independent; indeed, independence implies the Poisson distribution of point counts, but not the converse.[b]

Homogeneous Poisson point process

If a Poisson point process has a parameter of the form $\Lambda = \nu\lambda$, where $\nu$ is Lebesgue measure (that is, it assigns length, area, or volume to sets) and $\lambda$ is a constant, then the point process is called a homogeneous or stationary Poisson point process. The parameter, called rate or intensity, is related to the expected (or average) number of Poisson points existing in some bounded region,[51][52] where rate is usually used when the underlying space has one dimension.[51] The parameter $\lambda$ can be interpreted as the average number of points per some unit of extent such as length, area, volume, or time, depending on the underlying mathematical space, and it is also called the mean density or mean rate;[53] see Terminology.

Interpreted as a counting process

The homogeneous Poisson point process, when considered on the positive half-line, can be defined as a counting process, a type of stochastic process, which can be denoted as $\{N(t), t \ge 0\}$.[33][42] A counting process represents the total number of occurrences or events that have happened up to and including time $t$. A counting process is a homogeneous Poisson counting process with rate $\lambda > 0$ if it has the following three properties:[33][42]

- $N(0) = 0$;

- $N$ has independent increments; and

- the number of events (or points) in any interval of length $t$ is a Poisson random variable with parameter (or mean) $\lambda t$.

The last property implies:

$$E[N(t)] = \lambda t.$$

Explicitly, the probability of the random variable $N(t)$ being equal to $n$ is given by:

$$P\{N(t) = n\} = \frac{(\lambda t)^n}{n!} e^{-\lambda t}.$$

The Poisson counting process can also be defined by stating that the time differences between events of the counting process are exponential variables with mean $1/\lambda$.[54] The time differences between the events or arrivals are known as interarrival[55] or interoccurrence times.[54]
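The interarrival characterization gives a direct way to simulate a homogeneous Poisson process on $[0, T]$: add independent exponential gaps with mean $1/\lambda$ until the running total exceeds $T$. Below is a standard-library sketch with a hypothetical function name; note that Python's random.expovariate takes the rate, not the mean.

```python
import random


def poisson_arrivals(rate, horizon, rng):
    """Arrival times of a homogeneous Poisson process with the given rate on [0, horizon]."""
    times = []
    t = rng.expovariate(rate)  # first interarrival time, mean 1/rate
    while t <= horizon:
        times.append(t)
        t += rng.expovariate(rate)  # add the next exponential gap
    return times


rng = random.Random(0)
counts = [len(poisson_arrivals(3.0, 2.0, rng)) for _ in range(2000)]
print(sum(counts) / len(counts))  # should be close to rate * horizon = 6
```

The average count approximates $E[N(t)] = \lambda t$; a histogram of `counts` would similarly approximate the Poisson probabilities above.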

Interpreted as a point process on the real line

Interpreted as a point process, a Poisson point process can be defined on the real line by considering the number of points of the process in the interval $(a, b]$. For the homogeneous Poisson point process on the real line with parameter $\lambda > 0$, the probability of this random number of points, written here as $N(a, b]$, being equal to some counting number $n$ is given by:[56]

$$P\{N(a, b] = n\} = \frac{[\lambda(b - a)]^n}{n!} e^{-\lambda(b - a)}.$$

For some positive integer $k$, the homogeneous Poisson point process has the finite-dimensional distribution given by:[56]

$$P\{N(a_i, b_i] = n_i, \ i = 1, \dots, k\} = \prod_{i=1}^{k} \frac{[\lambda(b_i - a_i)]^{n_i}}{n_i!} e^{-\lambda(b_i - a_i)},$$

where the real numbers $a_i < b_i \le a_{i+1}$.

In other words, $N(a, b]$ is a Poisson random variable with mean $\lambda(b - a)$, where $a \le b$. Furthermore, the numbers of points in any two disjoint intervals, say, $(a_1, b_1]$ and $(a_2, b_2]$, are independent of each other, and this extends to any finite number of disjoint intervals.[56] In the queueing theory context, one can consider a point existing (in an interval) as an event, but this is different from the word event in the probability theory sense.[c] It follows that $\lambda$ is the expected number of arrivals that occur per unit of time.[42]
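Because the counts in disjoint intervals are independent Poisson variables, the finite-dimensional distribution is a product of one-dimensional Poisson probabilities. A short sketch (function names are hypothetical) that evaluates it, assuming the intervals are supplied in increasing order:

```python
import math


def poisson_pmf(n, mean):
    """P{N = n} for N ~ Poisson(mean), evaluated in log space."""
    if mean == 0:
        return 1.0 if n == 0 else 0.0
    return math.exp(n * math.log(mean) - mean - math.lgamma(n + 1))


def fdd_probability(rate, intervals, counts):
    """P{N(a_i, b_i] = n_i for all i} for a homogeneous Poisson process.

    `intervals` is a list of (a_i, b_i) pairs with a_i < b_i <= a_{i+1}.
    """
    for (_, b), (c, _) in zip(intervals, intervals[1:]):
        if b > c:
            raise ValueError("intervals must be disjoint and in increasing order")
    prob = 1.0
    for (a, b), n in zip(intervals, counts):
        prob *= poisson_pmf(n, rate * (b - a))  # independent factor per interval
    return prob


# Rate 2: one point in (0, 1] and two points in (1, 3].
print(fdd_probability(2.0, [(0.0, 1.0), (1.0, 3.0)], [1, 2]))  # 16 * exp(-6), about 0.0397
```

For the example, the first interval contributes $2e^{-2}$ and the second $\frac{4^2}{2!}e^{-4} = 8e^{-4}$, giving $16e^{-6}$.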

Key properties

The previous definition has two important features shared by Poisson point processes in general:[56][26]

- the number of arrivals in each finite interval has a Poisson distribution;

- the numbers of arrivals in disjoint intervals are independent random variables.

Furthermore, it has a third feature specific to the homogeneous Poisson point process:[57]

- the Poisson distribution of the number of arrivals in each interval $(a + t, b + t]$ only depends on the interval's length $b - a$.

In other words, for any finite $t > 0$, the distribution of the random variable $N(a + t, b + t]$ does not depend on $t$, so the process is also called a stationary Poisson process.[56]

Law of large numbers

The quantity $\lambda(b_i - a_i)$ can be interpreted as the expected or average number of points occurring in the interval $(a_i, b_i]$, namely:

$$E\{N(a_i, b_i]\} = \lambda(b_i - a_i),$$

where $E$ denotes the expectation operator. In other words, the parameter $\lambda$ of the Poisson process coincides with the density of points. Furthermore, the homogeneous Poisson point process adheres to its own form of the (strong) law of large numbers.[58] More specifically, with probability one:

$$\lim_{t \to \infty} \frac{N(t)}{t} = \lambda,$$

where $\lim$ denotes the limit of a function, and $\lambda$ is the expected number of arrivals occurring per unit of time.
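The strong law can be illustrated along a single simulated path: generate arrivals from exponential gaps and record $N(t)/t$ at increasing times. This is a standard-library sketch with a hypothetical function name; the printed ratios should approach $\lambda$, although any single run fluctuates by roughly $\sqrt{\lambda / t}$ around it.

```python
import random


def running_rate(rate, checkpoints, rng):
    """Follow one sample path and return (t, N(t)/t) at each checkpoint."""
    results = []
    n = 0
    next_arrival = rng.expovariate(rate)
    for t in sorted(checkpoints):
        while next_arrival <= t:  # count every arrival up to and including t
            n += 1
            next_arrival += rng.expovariate(rate)
        results.append((t, n / t))
    return results


path = running_rate(2.5, [10, 100, 1_000, 10_000, 100_000], random.Random(7))
for t, ratio in path:
    print(f"t = {t:>7}: N(t)/t = {ratio:.4f}")
```

Following one path, rather than drawing a fresh process for each $t$, matches the almost-sure statement of the law.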

Memoryless property

The distance between two consecutive points of a point process on the real line will be an exponential random variable with parameter $\lambda$ (or equivalently, mean $1/\lambda$). This implies that the points have the memoryless property: the existence of one point in a finite interval does not affect the probability (distribution) of other points existing,[59][60] but this property has no natural equivalent when the Poisson process is defined on a space with higher dimensions.[61]

Orderliness and simplicity

A point process with stationary increments is sometimes said to be orderly[62] or regular if:[63]

$$P\{N(t, t + \delta] > 1\} = o(\delta),$$

where little-o notation is being used. A point process is called a simple point process when the probability of any two of its points coinciding in the same position, on the underlying space, is zero. For point processes in general on the real line, the property of orderliness implies that the process is simple,[64] which is the case for the homogeneous Poisson point process.[65]

Martingale characterization

On the real line, the homogeneous Poisson point process has a connection to the theory of martingales via the following characterization: a simple counting process $\{N(t), t \ge 0\}$ with $N(0) = 0$ is the homogeneous Poisson point process with rate $\lambda$ if and only if

$$N(t) - \lambda t$$

is a martingale.[66]

Relationship to other processes

On the real line, the Poisson process is a type of continuous-time Markov process known as a birth-death process (with just births and zero deaths) and is called a pure[67] or simple birth process.[68] More complicated processes with the Markov property, such as Markov arrival processes, have been defined where the Poisson process is a special case.[54]

Restricted to the half-line

If the homogeneous Poisson process is considered just on the half-line $[0, \infty)$, which can be the case when $t$ represents time,[33] then the resulting process is not truly invariant under translation.[61] In that case the Poisson process is no longer stationary, according to some definitions of stationarity.[27]

Applications

There have been many applications of the homogeneous Poisson process on the real line in an attempt to model the occurrence of seemingly random and independent events. It has a fundamental role in queueing theory, which is the probability field of developing suitable stochastic models to represent the random arrival and departure of certain phenomena.[15][54] For example, customers arriving and being served or phone calls arriving at a phone exchange can both be studied with techniques from queueing theory.

Generalizations

The homogeneous Poisson process on the real line is considered one of the simplest stochastic processes for counting random numbers of points.[69][70] This process can be generalized in a number of ways. One possible generalization is to extend the distribution of interarrival times from the exponential distribution to other distributions, which introduces the stochastic process known as a renewal process. Another generalization is to define the Poisson point process on higher dimensional spaces such as the plane.[71]

Spatial Poisson point process

A spatial Poisson process is a Poisson point process defined in the plane $\mathbb{R}^2$.[66][72] For its mathematical definition, one first considers a bounded, open or closed (or more precisely, Borel measurable) region $B$ of the plane. The number of points of a point process $N$ existing in this region $B \subset \mathbb{R}^2$ is a random variable, denoted by $N(B)$. If the points belong to a homogeneous Poisson process with parameter $\lambda > 0$, then the probability of $n$ points existing in $B$ is given by:

$$P\{N(B) = n\} = \frac{(\lambda |B|)^n}{n!} e^{-\lambda |B|},$$

where $|B|$ denotes the area of $B$.

For some finite integer $k \ge 1$, we can give the finite-dimensional distribution of the homogeneous Poisson point process by first considering a collection of disjoint, bounded Borel (measurable) sets $B_1, \dots, B_k$. The number of points of the point process $N$ existing in $B_i$ can be written as $N(B_i)$. Then the homogeneous Poisson point process with parameter $\lambda > 0$ has the finite-dimensional distribution:[73]

$$P\{N(B_i) = n_i, \ i = 1, \dots, k\} = \prod_{i=1}^{k} \frac{(\lambda |B_i|)^{n_i}}{n_i!} e^{-\lambda |B_i|}.$$
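A homogeneous spatial Poisson process on a rectangle can be simulated in two steps: draw the total number of points as a Poisson variable with mean $\lambda|B|$, then place that many points independently and uniformly in $B$ (this relies on the uniformity property of homogeneous Poisson points). In the standard-library sketch below, the helper names and the example rectangle are hypothetical, and the Poisson count is drawn by counting unit-rate exponential arrivals in $[0, \lambda|B|]$, an application of the counting-process characterization on the real line.

```python
import random


def poisson_count(mean, rng):
    """Poisson(mean) variate: unit-rate exponential arrivals falling in [0, mean]."""
    n, s = 0, rng.expovariate(1.0)
    while s <= mean:
        n += 1
        s += rng.expovariate(1.0)
    return n


def homogeneous_ppp_rectangle(intensity, width, height, rng):
    """Points of a homogeneous Poisson process on [0, width] x [0, height]."""
    n = poisson_count(intensity * width * height, rng)  # step 1: number of points
    return [(rng.uniform(0, width), rng.uniform(0, height))  # step 2: positions
            for _ in range(n)]


rng = random.Random(11)
samples = [homogeneous_ppp_rectangle(5.0, 2.0, 3.0, rng) for _ in range(1000)]
mean_count = sum(len(pts) for pts in samples) / len(samples)
print(mean_count)  # should be close to 5 * 2 * 3 = 30
```

Counting the points that fall in two disjoint sub-rectangles of one realization would likewise give (approximately, over many runs) independent Poisson counts with means proportional to their areas.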

Applications

According to one statistical study, the positions of cellular or mobile phone base stations in the Australian city Sydney, pictured above, resemble a realization of a homogeneous Poisson point process, while in many other cities around the world they do not and other point processes are required.[74]

The spatial Poisson point process features prominently in spatial statistics,[21][22] stochastic geometry, and continuum percolation theory.[23] This point process is applied in various physical sciences, for example in a model developed for the detection of alpha particles. In recent years, it has been frequently used to model seemingly disordered spatial configurations of certain wireless communication networks.[17][18][19] For example, models for cellular or mobile phone networks have been developed where it is assumed the phone network transmitters, known as base stations, are positioned according to a homogeneous Poisson point process.

Defined in higher dimensions

The previous homogeneous Poisson point process immediately extends to higher dimensions by replacing the notion of area with (high-dimensional) volume. For some bounded region $B$ of Euclidean space $\mathbb{R}^d$, if the points form a homogeneous Poisson process with parameter $\lambda > 0$, then the probability of $n$ points existing in $B \subset \mathbb{R}^d$ is given by:

$$P\{N(B) = n\} = \frac{(\lambda |B|)^n}{n!} e^{-\lambda |B|},$$

where $|B|$ now denotes the $d$-dimensional volume of $B$. Furthermore, for a collection of disjoint, bounded Borel sets $B_1, \dots, B_k \subset \mathbb{R}^d$, let $N(B_i)$ denote the number of points of $N$ existing in $B_i$. Then the corresponding homogeneous Poisson point process with parameter $\lambda > 0$ has the finite-dimensional distribution:[75]

$$P\{N(B_i) = n_i, \ i = 1, \dots, k\} = \prod_{i=1}^{k} \frac{(\lambda |B_i|)^{n_i}}{n_i!} e^{-\lambda |B_i|}.$$

Homogeneous Poisson point processes do not depend on the position of the underlying space through their parameter $\lambda$, which implies that such a process is both a stationary process (invariant to translation) and an isotropic (invariant to rotation) stochastic process.[27] Similarly to the one-dimensional case, if the homogeneous point process is restricted to some bounded subset of $\mathbb{R}^d$, then, depending on some definitions of stationarity, the process is no longer stationary.[27][61]

Points are uniformly distributed

If the homogeneous point process is defined on the real line as a mathematical model for occurrences of some phenomenon, then it has the characteristic that the positions of these occurrences or events on the real line (often interpreted as time) will be uniformly distributed. More specifically, if an event occurs (according to this process) in an interval $(a, b]$ where $a \le b$, then its location will be a uniform random variable defined on that interval.[73] Furthermore, the homogeneous point process is sometimes called the uniform Poisson point process (see Terminology). This uniformity property extends to higher dimensions in Cartesian coordinates, but not in, for example, polar coordinates.[76][77]

Inhomogeneous Poisson point process

Graph of an inhomogeneous Poisson point process on the real line. The events are marked with black crosses, and the time-dependent rate $\lambda(t)$ is given by the function marked red.

The inhomogeneous or nonhomogeneous Poisson point process (see Terminology) is a Poisson point process with a Poisson parameter set as some location-dependent function in the underlying space on which the Poisson process is defined. For Euclidean space $\mathbb{R}^d$, this is achieved by introducing a locally integrable positive function $\lambda \colon \mathbb{R}^d \to [0, \infty)$, where $x$ is a $d$-dimensional point located in $\mathbb{R}^d$, such that for any bounded region $B$ the ($d$-dimensional) volume integral of $\lambda(x)$ over region $B$ is finite. In other words, if this integral, denoted by $\Lambda(B)$, is:[52]

$$\Lambda(B) = \int_B \lambda(x)\,\mathrm{d}x < \infty,$$

where $\mathrm{d}x$ is a ($d$-dimensional) volume element,[d] then for any collection of disjoint bounded Borel measurable sets $B_1, \dots, B_k$, an inhomogeneous Poisson process with (intensity) function $\lambda(x)$ has the finite-dimensional distribution:[75]

$$P\{N(B_i) = n_i, \ i = 1, \dots, k\} = \prod_{i=1}^{k} \frac{(\Lambda(B_i))^{n_i}}{n_i!} e^{-\Lambda(B_i)}.$$

Furthermore, $\Lambda(B)$ has the interpretation of being the expected number of points of the Poisson process located in the bounded region $B$, namely

$$\Lambda(B) = E[N(B)].$$

Defined on the real line

On the real line, the inhomogeneous or non-homogeneous Poisson point process has mean measure given by a one-dimensional integral. For two real numbers $a$ and $b$, where $a \le b$, denote by $N(a, b]$ the number of points of an inhomogeneous Poisson process with intensity function $\lambda(t)$ occurring in the interval $(a, b]$. The probability of $n$ points existing in the above interval $(a, b]$ is given by:

$$P\{N(a, b] = n\} = \frac{[\Lambda(a, b)]^n}{n!} e^{-\Lambda(a, b)},$$

where the mean or intensity measure is:

$$\Lambda(a, b) = \int_a^b \lambda(t)\,\mathrm{d}t,$$

which means that the random variable $N(a, b]$ is a Poisson random variable with mean $E\{N(a, b]\} = \Lambda(a, b)$.

A feature of the one-dimensional setting is that an inhomogeneous Poisson process can be transformed into a homogeneous one by a monotone transformation or mapping, which is achieved with the inverse of $\Lambda$.[78][79]
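The time-change idea can be sketched in the opposite direction, to generate an inhomogeneous process: simulate a unit-rate homogeneous process on $[0, \Lambda(0, T)]$ and map each point $s$ to $\Lambda^{-1}(s)$, where here $\Lambda(0, t)$ is written as a function of $t$ alone. The example assumes, for illustration only, the intensity $\lambda(t) = 2t$, for which $\Lambda(0, t) = t^2$ and $\Lambda^{-1}(s) = \sqrt{s}$; the function name is hypothetical, and the mapping requires $\Lambda(0, t)$ to be continuous and strictly increasing in $t$.

```python
import math
import random


def inhomogeneous_by_time_change(cum_intensity, inverse_cum_intensity, horizon, rng):
    """Points on [0, horizon] of an inhomogeneous Poisson process.

    cum_intensity(t) is Lambda(0, t) and inverse_cum_intensity is its inverse.
    Unit-rate arrivals s on [0, Lambda(0, horizon)] are mapped to Lambda^{-1}(s).
    """
    total = cum_intensity(horizon)
    points = []
    s = rng.expovariate(1.0)
    while s <= total:
        points.append(inverse_cum_intensity(s))
        s += rng.expovariate(1.0)
    return points


# Intensity lambda(t) = 2t, so Lambda(0, t) = t**2 and Lambda^{-1}(s) = sqrt(s).
rng = random.Random(3)
runs = [inhomogeneous_by_time_change(lambda t: t * t, math.sqrt, 3.0, rng)
        for _ in range(4000)]
in_1_2 = sum(sum(1 for x in pts if 1.0 < x <= 2.0) for pts in runs) / len(runs)
print(in_1_2)  # should be close to Lambda(1, 2) = 4 - 1 = 3
```

Since the map is increasing, the generated points come out already sorted, and the average number in any interval $(a, b]$ approximates $\Lambda(a, b)$.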

Counting process interpretation

The inhomogeneous Poisson point process, when considered on the positive half-line, is also sometimes defined as a counting process. With this interpretation, the process, which is sometimes written as $\{N(t), t \ge 0\}$, represents the total number of occurrences or events that have happened up to and including time $t$. A counting process is said to be an inhomogeneous Poisson counting process if it has the four properties:[42][80]

- $N(0) = 0$;

- $N$ has independent increments;

- $P\{N(t + h) - N(t) = 1\} = \lambda(t) h + o(h)$; and

- $P\{N(t + h) - N(t) \ge 2\} = o(h)$,

where $o(h)$ is asymptotic or little-o notation for $o(h)/h \to 0$ as $h \to 0$. In the case of point processes with refractoriness (e.g., neural spike trains), a stronger version of property 4 applies:[81] $P\{N(t + h) - N(t) \ge 2\} = o(h^2)$.

The above properties imply that $N(t + h) - N(t)$ is a Poisson random variable with the parameter (or mean)

$$E[N(t + h) - N(t)] = \int_t^{t+h} \lambda(s)\,\mathrm{d}s,$$

which implies

$$E[N(h)] = \int_0^h \lambda(s)\,\mathrm{d}s.$$

Spatial Poisson process

An inhomogeneous Poisson process defined in the plane $\mathbb{R}^2$ is called a spatial Poisson process.[16] It is defined with intensity function $\lambda(x,y)$, and its intensity measure is obtained by performing a surface integral of its intensity function over some region.[20][82] For example, its intensity function (as a function of Cartesian coordinates $x$ and $y$) can be

$$\lambda(x,y) = e^{-(x^2+y^2)},$$

so the corresponding intensity measure is given by the surface integral

$$\Lambda(B) = \int_B e^{-(x^2+y^2)}\,dx\,dy,$$

where $B$ is some bounded region in the plane $\mathbb{R}^2$.
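The surface integral can be checked numerically. The Python sketch below applies a simple midpoint rule to the example intensity $e^{-(x^2+y^2)}$; restricting $B$ to a rectangle and the grid size `n` are assumptions made for illustration. Over a large square the result approaches $\pi$, the integral over the whole plane, and over the unit square it approaches $\left(\tfrac{\sqrt{\pi}}{2}\operatorname{erf}(1)\right)^2 \approx 0.5577$.

```python
import math


def intensity(x, y):
    """Example intensity function lambda(x, y) = exp(-(x**2 + y**2))."""
    return math.exp(-(x * x + y * y))


def intensity_measure_rect(f, x0, x1, y0, y1, n=400):
    """Midpoint-rule approximation of Lambda(B) for B = [x0, x1] x [y0, y1],
    using an n-by-n grid of cell centres."""
    hx = (x1 - x0) / n
    hy = (y1 - y0) / n
    total = 0.0
    for i in range(n):
        x = x0 + (i + 0.5) * hx
        for j in range(n):
            y = y0 + (j + 0.5) * hy
            total += f(x, y)
    return total * hx * hy


unit_square = intensity_measure_rect(intensity, 0.0, 1.0, 0.0, 1.0)
whole_plane = intensity_measure_rect(intensity, -6.0, 6.0, -6.0, 6.0)  # tails beyond 6 are negligible
```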

In higher dimensions

In the plane, $\Lambda(B)$ corresponds to a surface integral, while in $\mathbb{R}^d$ the integral becomes a ($d$-dimensional) volume integral.

Applications

When the real line is interpreted as time, the inhomogeneous process is used in the fields of counting processes and in queueing theory.[80][83] Examples of phenomena which have been represented by or appear as an inhomogeneous Poisson point process include:

- Goals being scored in a soccer game.[84]

- Defects in a circuit board.[85]

In the plane, the Poisson point process is important in the related disciplines of stochastic geometry[1][43] and spatial statistics.[21][22] The intensity measure of this point process depends on the location in the underlying space, which means it can be used to model phenomena with a density that varies over some region. In other words, the phenomena can be represented as points that have a location-dependent density.[20] This process has been used in various disciplines, including the study of salmon and sea lice in the oceans,[86] forestry,[5] and search problems.[87]

Interpretation of the intensity function

The Poisson intensity function has an interpretation, considered intuitive,[20] with the volume element $dx$ in the infinitesimal sense: $\lambda(x)\,dx$ is the infinitesimal probability of a point of a Poisson point process existing in a region of space with volume $dx$ located at $x$.[20]

For example, given a homogeneous Poisson point process on the real line with intensity $\lambda$, the probability of finding a single point of the process in a small interval of width $\delta$ is approximately $\lambda\delta$. In fact, such intuition is how the Poisson point process is sometimes introduced and its distribution derived.[88][50][89]
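This approximation can be checked with a short Python sketch. For a homogeneous process with rate $\lambda$, the exact probability of exactly one point in an interval of width $\delta$ is $\lambda\delta e^{-\lambda\delta}$, and the probability of two or more points is $1 - e^{-\lambda\delta}(1 + \lambda\delta)$. The rate value below is an arbitrary assumption.

```python
import math


def prob_exactly_one(rate, width):
    """Exact P(N = 1) for a homogeneous Poisson process on an interval of this width."""
    mean = rate * width
    return mean * math.exp(-mean)


def prob_two_or_more(rate, width):
    """Exact P(N >= 2) on the same interval."""
    mean = rate * width
    return 1.0 - math.exp(-mean) * (1.0 + mean)


rate = 2.0  # arbitrary example rate
for width in (0.1, 0.01, 0.001):
    ratio_one = prob_exactly_one(rate, width) / (rate * width)  # equals exp(-rate * width)
    ratio_many = prob_two_or_more(rate, width) / width  # shrinks towards 0, i.e. o(width)
    print(f"width={width}: P(one)/(rate*width)={ratio_one:.4f}, P(>=2)/width={ratio_many:.6f}")
```

As the width shrinks, the first ratio tends to 1 and the second to 0, which is the sense in which $\lambda\delta$ is the probability of finding a single point.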

Simple point process

If a Poisson point process has an intensity measure that is locally finite and diffuse (or non-atomic), then it is a simple point process. For a simple point process, the probability of a point existing at a single point or location in the underlying (state) space is either zero or one. This implies that, with probability one, no two (or more) points of a Poisson point process coincide in location in the underlying space.[90][18][91]

Simulation

Simulating a Poisson point process on a computer is usually done in a bounded region of space, known as a simulation window, and requires two steps: appropriately creating a random number of points and then suitably placing the points in a random manner. Both of these steps depend on the specific Poisson point process that is being simulated.[92][93]

Step 1: Number of points

The number of points $N$ in the window, denoted here by $W$, needs to be simulated, which is done using a (pseudo)-random number generating function capable of simulating Poisson random variables.

Homogeneous case

For the homogeneous case with the constant $\lambda$, the mean of the Poisson random variable $N$ is set to $\lambda|W|$, where $|W|$ is the length, area or ($d$-dimensional) volume of $W$.
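Step 1 for the homogeneous case can be sketched in Python as follows, assuming a rectangular window $W = [0, w] \times [0, h]$ so that $|W| = wh$. Python's standard `random` module has no Poisson generator, so the hypothetical helper `sample_poisson` counts unit-rate exponential arrivals falling in $[0, \text{mean}]$; this gives an exact Poisson variate but takes time proportional to the mean, and a library routine such as NumPy's `Generator.poisson` would normally be preferred.

```python
import random
import statistics


def sample_poisson(mean, rng):
    """Poisson(mean) variate: the number of arrivals of a unit-rate process in
    [0, mean], built from exponential(1) gaps. Exact, but O(mean) per draw."""
    count = 0
    t = rng.expovariate(1.0)
    while t <= mean:
        count += 1
        t += rng.expovariate(1.0)
    return count


def number_of_points_homogeneous(rate, width, height, rng):
    """Step 1 for a homogeneous process on W = [0, width] x [0, height]."""
    area = width * height  # |W|
    return sample_poisson(rate * area, rng)


rng = random.Random(42)
draws = [number_of_points_homogeneous(5.0, 2.0, 1.5, rng) for _ in range(5000)]
```

With $\lambda = 5$ and $|W| = 3$, the sample mean and variance of `draws` should both be close to $\lambda|W| = 15$.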

Inhomogeneous case

For the inhomogeneous case, $\lambda|W|$ is replaced with the ($d$-dimensional) volume integral

$$\Lambda(W) = \int_W \lambda(x)\,dx.$$
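When $\Lambda(W)$ has no closed form, it can be estimated numerically before being used as the Poisson mean. The Python sketch below uses plain Monte Carlo integration over a box-shaped window in $d$ dimensions; the box shape, sample size, example intensity and function names are assumptions for illustration, and the sampling error of the estimate carries over into the simulated number of points.

```python
import math
import random


def intensity_measure_mc(intensity, lower, upper, samples, rng):
    """Monte Carlo estimate of Lambda(W) for the box W = prod_i [lower[i], upper[i]]:
    |W| times the average of the intensity at uniformly drawn points of W."""
    volume = math.prod(u - l for l, u in zip(lower, upper))  # |W|
    total = 0.0
    for _ in range(samples):
        x = [rng.uniform(l, u) for l, u in zip(lower, upper)]
        total += intensity(x)
    return volume * total / samples


def gaussian_intensity(x):
    # Example intensity exp(-|x|^2); its integral over all of R^3 is pi ** 1.5.
    return math.exp(-sum(xi * xi for xi in x))


rng = random.Random(3)
estimate = intensity_measure_mc(gaussian_intensity, [-3.0] * 3, [3.0] * 3, 100_000, rng)
```

For a constant intensity the estimate reduces exactly to $\lambda|W|$, matching the homogeneous case.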

Step 2: Positioning of points

The second stage requires randomly placing the $N$ points in the window $W$.

Homogeneous case

For the homogeneous case in one dimension, all points are uniformly and independently placed in the window or interval $W$. For higher dimensions in a Cartesian coordinate system, each coordinate is uniformly and independently placed in the window $W$. If the window is not a Cartesian box (for example, the inside or the surface of a unit sphere), then drawing each coordinate uniformly will not place the points uniformly in $W$, and a suitable change of coordinates (from Cartesian) is needed.[92]
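Both steps for a homogeneous process can be sketched in Python under stated assumptions: a rectangle, where each Cartesian coordinate is drawn uniformly, and a disk of radius $R$ centred at the origin. On the disk, drawing the polar radius uniformly would crowd points near the centre, so the sketch uses $r = R\sqrt{U}$ with $U$ uniform on $[0,1]$ to account for the area element $r\,dr\,d\theta$. The helper names are hypothetical, and `sample_poisson` uses the exponential-gap construction rather than a library routine.

```python
import math
import random


def sample_poisson(mean, rng):
    """Poisson(mean) variate from unit-rate exponential arrivals in [0, mean]."""
    count = 0
    t = rng.expovariate(1.0)
    while t <= mean:
        count += 1
        t += rng.expovariate(1.0)
    return count


def homogeneous_rectangle(rate, width, height, rng):
    """Homogeneous process on [0, width] x [0, height]: each Cartesian
    coordinate is drawn independently and uniformly."""
    n = sample_poisson(rate * width * height, rng)
    return [(rng.uniform(0.0, width), rng.uniform(0.0, height)) for _ in range(n)]


def homogeneous_disk(rate, radius, rng):
    """Homogeneous process on a disk centred at the origin. A uniform radius
    would over-weight the centre, so r = radius * sqrt(U) is used instead."""
    n = sample_poisson(rate * math.pi * radius**2, rng)
    points = []
    for _ in range(n):
        r = radius * math.sqrt(rng.random())
        theta = 2.0 * math.pi * rng.random()
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

For example, `homogeneous_disk(50.0, 1.0, random.Random(0))` returns on average about $50\pi \approx 157$ points, and roughly a quarter of all points fall within radius 0.5, as expected for uniform placement.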

Inhomogeneous case

For the inhomogeneous case, a couple of different methods can be used depending on the nature of the intensity function $\lambda(x)$.[92] If the intensity function is sufficiently simple, then independent and random non-uniform (Cartesian or other) coordinates of the points can be generated. For example, simulating a Poisson point process on a circular window can be done for an isotropic intensity function (in polar coordinates $r$ and $\theta$), meaning it is rotationally invariant, that is, independent of $\theta$ but dependent on $r$, by a change of variable in $r$ if the intensity function is sufficiently simple.[92]
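As a Python sketch of the change of variable in $r$, assume the hypothetical isotropic intensity $\lambda(r) = c\,r$ on a disk of radius $R$. Then $\Lambda(W) = \int_0^{2\pi}\int_0^R c\,r \cdot r\,dr\,d\theta = 2\pi c R^3/3$, and the radius of each point has density proportional to $\lambda(r)\,r \propto r^2$ on $[0, R]$, with distribution function $(r/R)^3$. Inverting it gives $r = R\,U^{1/3}$ for $U$ uniform on $[0,1]$, while $\theta$ stays uniform on $[0, 2\pi)$.

```python
import math
import random


def sample_poisson(mean, rng):
    """Poisson(mean) variate: number of unit-rate exponential arrivals in [0, mean]."""
    count = 0
    t = rng.expovariate(1.0)
    while t <= mean:
        count += 1
        t += rng.expovariate(1.0)
    return count


def isotropic_linear_disk(c, radius, rng):
    """Inhomogeneous Poisson process on a disk with intensity c * r (hypothetical example)."""
    total_mean = 2.0 * math.pi * c * radius**3 / 3.0  # Lambda(W)
    n = sample_poisson(total_mean, rng)
    points = []
    for _ in range(n):
        r = radius * rng.random() ** (1.0 / 3.0)  # inverse of the radial CDF (r / R)**3
        theta = 2.0 * math.pi * rng.random()  # uniform angle: intensity ignores theta
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

Since $\operatorname{E}[U^{1/3}] = 3/4$, the average distance of the simulated points from the centre should be close to $3R/4$.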

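For more complicated intensity functions, one can use an acceptance-rejection method, which consists of using (or 'accepting') only certain random points and not using (or 'rejecting') the other points, based on the ratio:[94]

$$\frac{\lambda(x_i)}{\lambda^*},$$

where $x_i$ is the point under consideration for acceptance or rejection, and the candidate points are generated by a homogeneous Poisson point process on $W$ with constant intensity $\lambda^* \ge \sup_{x \in W} \lambda(x)$.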
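A Python sketch of this acceptance-rejection (thinning) step, under stated assumptions: the window is the square $[-1,1]^2$, the hypothetical intensity is $\lambda(x,y) = 100\,e^{-(x^2+y^2)}$, whose maximum on the window, written $\lambda^*$ here, is 100 at the origin, and candidate points come from a homogeneous process with that constant intensity. Each candidate $x_i$ is kept with probability $\lambda(x_i)/\lambda^*$, so the expected number of kept points is $\Lambda(W) = 100\,(\sqrt{\pi}\operatorname{erf}(1))^2 \approx 223.1$.

```python
import math
import random


def sample_poisson(mean, rng):
    """Poisson(mean) variate: number of unit-rate exponential arrivals in [0, mean]."""
    count = 0
    t = rng.expovariate(1.0)
    while t <= mean:
        count += 1
        t += rng.expovariate(1.0)
    return count


def thinned_process(intensity, lam_max, x0, x1, y0, y1, rng):
    """Inhomogeneous Poisson process on [x0, x1] x [y0, y1] by thinning:
    simulate a homogeneous process with intensity lam_max >= max intensity,
    then keep each candidate with probability intensity(point) / lam_max."""
    area = (x1 - x0) * (y1 - y0)
    n = sample_poisson(lam_max * area, rng)
    kept = []
    for _ in range(n):
        x, y = rng.uniform(x0, x1), rng.uniform(y0, y1)
        if rng.random() < intensity(x, y) / lam_max:
            kept.append((x, y))
    return kept


def intensity(x, y):
    # Hypothetical example intensity with maximum 100 at the origin.
    return 100.0 * math.exp(-(x * x + y * y))
```

The bound `lam_max` must not be smaller than the true maximum of the intensity; otherwise the acceptance ratio exceeds 1 in places and those regions are under-represented. A loose bound remains correct but wastes candidates.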

General Poisson point process

The Poisson point process can be further generalized to what is sometimes known as the general Poisson point process[20][95] or general Poisson process[82] by using a Radon measure $\Lambda$, which is a locally finite measure. In general, this Radon measure $\Lambda$ can be atomic, which means multiple points of the Poisson point process can exist in the same location of the underlying space. In this situation, the number of points at $x$ is a Poisson random variable with mean $\Lambda(\{x\})$.[95] But sometimes the converse is assumed, so the Radon measure $\Lambda$ is diffuse or non-atomic.[20]

A point process $N$ is a general Poisson point process with intensity $\Lambda$ if it has the following two properties:[20]

- the number of points in a bounded Borel set $B$ is a Poisson random variable with mean $\Lambda(B)$. In other words, denote the total number of points located in $B$ by $N(B)$; then the probability of the random variable $N(B)$ being equal to $n$ is given by $P\{N(B) = n\} = \frac{(\Lambda(B))^n}{n!}\, e^{-\Lambda(B)}$;

- the numbers of points in $n$ disjoint Borel sets form $n$ independent random variables.

The Radon measure $\Lambda$ maintains its previous interpretation of being the expected number of points of $N$ located in the bounded region $B$, namely

$$\Lambda(B) = \operatorname{E}[N(B)].$$

Furthermore, if $\Lambda$ is absolutely continuous such that it has a density (which is the Radon–Nikodym density or derivative) with respect to the Lebesgue measure, then for all Borel sets $B$ it can be written as:

$$\Lambda(B) = \int_B \lambda(x)\,dx,$$

where the density $\lambda(x)$ is known, among other terms, as the intensity function.

History

Poisson distribution

Despite its name, the Poisson point process was neither discovered nor studied by the French mathematician Siméon Denis Poisson; the name is cited as an example of Stigler's law.[13][14] The name stems from its inherent relation to the Poisson distribution, derived by Poisson as a limiting case of the binomial distribution.[96] The binomial distribution describes the probability of the sum of $n$ Bernoulli trials with probability $p$, often likened to the number of heads (or tails) after $n$ biased flips of a coin with the probability of a head (or tail) occurring being $p$. For some positive constant $\Lambda > 0$, as $n$ increases towards infinity and $p$ decreases towards zero such that the product $np = \Lambda$ is fixed, the Poisson distribution more closely approximates that of the binomial.[97]
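The limit can be observed numerically. The Python sketch below fixes $np = 3$ (an arbitrary choice) and computes, for increasing $n$, the largest absolute difference between the binomial and Poisson probability mass functions over $k = 0, \dots, 20$; the cut-off at $k = 20$ is likewise an assumption. The gap shrinks steadily, roughly like $1/n$.

```python
import math


def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p); math.comb returns 0 when k > n."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k, mean):
    """P(Y = k) for Y ~ Poisson(mean)."""
    return mean**k * math.exp(-mean) / math.factorial(k)


def max_pmf_gap(n, mean, kmax=20):
    """Largest |binomial - Poisson| pmf difference for k = 0..kmax, with n * p = mean."""
    p = mean / n
    return max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, mean)) for k in range(kmax + 1))


gaps = [max_pmf_gap(n, 3.0) for n in (10, 100, 1000, 10000)]
```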

Poisson derived the Poisson distribution, published in 1841, by examining the binomial distribution in the limit of $p$ (to zero) and $n$ (to infinity). It only appears once in all of Poisson's work,[98] and the result was not well known during his time. Over the following years a number of people used the distribution without citing Poisson, including Philipp Ludwig von Seidel and Ernst Abbe.[99][13] At the end of the 19th century, Ladislaus Bortkiewicz would study the distribution again in a different setting (citing Poisson), using the distribution with real data to study the number of deaths from horse kicks in the Prussian army.[96][100]

Discovery

There are a number of claims for early uses or discoveries of the Poisson point process.[13][14] For example, John Michell in 1767, a decade before Poisson was born, was interested in the probability of a star being within a certain region of another star under the assumption that the stars were 'scattered by mere chance', and studied an example consisting of the six brightest stars in the Pleiades, without deriving the Poisson distribution. This work inspired Simon Newcomb to study the problem and to calculate the Poisson distribution as an approximation for the binomial distribution in 1860.[14]

At the beginning of the 20th century the Poisson process (in one dimension) would arise independently in different situations.[13][14] In Sweden in 1903, Filip Lundberg published a thesis containing work, now considered fundamental and pioneering, where he proposed to model insurance claims with a homogeneous Poisson process.[101][102]

In Denmark in 1909 another discovery occurred when A.K. Erlang derived the Poisson distribution when developing a mathematical model for the number of incoming phone calls in a finite time interval. Erlang was not at the time aware of Poisson's earlier work and assumed that the numbers of phone calls arriving in each interval of time were independent of each other. He then found the limiting case, which is effectively recasting the Poisson distribution as a limit of the binomial distribution.[13]

In 1910 Ernest Rutherford and Hans Geiger published experimental results on counting alpha particles. Their experimental work had mathematical contributions from Harry Bateman, who derived Poisson probabilities as a solution to a family of differential equations, though the solution had been derived earlier, resulting in the independent discovery of the Poisson process.[13]

Early applications

The years after 1909 led to a number of studies and applications of the Poisson point process; however, its early history is complex, which has been explained by the various applications of the process in numerous fields by biologists, ecologists, engineers and others working in the physical sciences. The early results were published in different languages and in different settings, with no standard terminology and notation used.[13] For example, in 1922 Swedish chemist and Nobel Laureate Theodor Svedberg proposed a model in which a spatial Poisson point process is the underlying process in order to study how plants are distributed in plant communities.[103] A number of mathematicians started studying the process in the early 1930s, and important contributions were made by Andrey Kolmogorov, William Feller and Aleksandr Khinchin,[13] among others.[104] In the field of teletraffic engineering, mathematicians and statisticians studied and used Poisson and other point processes.[105]

History of terms

The Swede Conny Palm in his 1943 dissertation studied the Poisson and other point processes in the one-dimensional setting by examining them in terms of the statistical or stochastic dependence between the points in time.[106][105] His work contains the first known recorded use of the term point processes, as Punktprozesse in German.[106][14]

It is believed[13] that William Feller was the first in print to refer to it as the Poisson process in a 1940 paper. Although the Swede Ove Lundberg used the term Poisson process in his 1940 PhD dissertation,[14] in which Feller was acknowledged as an influence,[107] it has been claimed that Feller coined the term before 1940.[97] It has been remarked that both Feller and Lundberg used the term as though it were well known, implying it was already in spoken use by then.[14] Feller worked from 1936 to 1939 alongside Harald Cramér at Stockholm University, where Lundberg was a PhD student under Cramér. Cramér did not use the term Poisson process in a book he finished in 1936, but did in subsequent editions, which has led to the speculation that the term Poisson process was coined sometime between 1936 and 1939 at Stockholm University.[14]

Terminology

The terminology of point process theory in general has been criticized for being too varied.[14] In addition to the word point often being omitted,[71][2] the homogeneous Poisson (point) process is also called a stationary Poisson (point) process,[56] as well as uniform Poisson (point) process.[51] The inhomogeneous Poisson point process, as well as being called nonhomogeneous,[56] is also referred to as the non-stationary Poisson process.[80][108]

The term point process has been criticized, as the term process can suggest evolution over time and space, so random point field[109] has been suggested instead, resulting in the terms Poisson random point field or Poisson point field also being used.[110] A point process is considered, and sometimes called, a random counting measure,[111] hence the Poisson point process is also referred to as a Poisson random measure,[112] a term used in the study of Lévy processes,[112][113] but some choose to use the two terms for Poisson point processes defined on two different underlying spaces.[114]